
MEGA_RJ_MICAL

Re: RISC V Laptop announced... Could this be the ultimate AMIGA hardware?

Posted on 26-Jul-2022 0:02:49 [ #41 ]

Super Member

Joined: 13-Dec-2019

Posts: 1200

From: AMIGAWORLD.NET WAS ORIGINALLY FOUNDED BY DAVID DOYLE

matthey

Posted on 26-Jul-2022 0:10:13 [ #42 ]

Super Member

Joined: 14-Mar-2007

Posts: 1999

From: Kansas

Hans Quote:

Interesting topic.

RISC-V's future looks promising. There's a lot of interest in the AI field, partially because they can tailor the CPU for specific tasks, and optimize the power consumption.

The open-source nature has its good and bad sides. One potential risk is that the CPU architecture ends up with as many variants as Linux has distros, effectively killing binary compatibility. It already sucks that we can't rely on all new PowerPC CPUs having AltiVec (or even a standard FPU). JIT recompilation could address this problem at the application level, but it'll likely still be a pain for low-level kernel & driver code.

If we were to switch architecture today, then I'd choose ARM. ARM is making genuine inroads into the desktop market (e.g., Apple M1/M2), and has a real chance of knocking x86/x64 off its desktop perch. It already dominates in mobile, has gigantic momentum behind it, and a huge ecosystem of software and tools. This includes GPUs.**

RISC-V would be a riskier bet at this stage. It's growing, but there are a lot of unknowns. The laptop project you linked to says it'll have a GPU/NPU, but I don't know of a single RISC-V SOC that has a GPU on-board. A lot still needs to be developed, and it's not clear which computing niche RISC-V is going to dominate. There's no guarantee that it will oust ARM or x64 in the mobile or desktop arenas at all, let alone soon.

|

Ironically, RISC-V is used or being evaluated by several top GPU developers including Nvidia, Imagination Technologies and Intel for the "management" processor. Nvidia and Imagination Technologies are likely using RISC-V processors in current GPU products though they may not disclose which particular products are using RISC-V. Imagination Technologies further claims their GPUs are compatible with RISC-V CPUs.

https://www.theregister.com/2021/12/09/gpus_for_riscv/ Quote:

Imagination Technologies, which this week re-entered the CPU market with a RISC-V processor called Catapult, said its GPUs will work with RISC-V. The company's PowerVR graphics cores were used by Apple in iPhones, and are popular in mobile devices with chips from companies including MediaTek.

"There is no reason why you could not integrate C-series -- which is the part that has ray tracing -- with RISC-V," David Harold, chief marketing officer at Imagination, told The Register.

Andes Technology, which creates RISC-V chip designs, has verified that Imagination's GPUs work with RISC-V, and so has RIOS Lab, which has David Patterson, vice chair of the Board at RISC-V Foundation, on staff.

|

The last sentence implies that there may be unreleased RISC-V SoCs with 3D GPUs. There was a RISC-V evaluation SoC with a 3D-capable GPU, which was made in very limited quantities.

https://www.aliexpress.com/item/3256803209663707.html?gatewayAdapt=4itemAdapt

It's not clear what GPU was used, and the technical information, likely translated from Chinese to English, is more funny than usable. The risk of producing a RISC-V SoC with a GPU for a small market is really the only issue, much like creating an SoC for the Amiga market.

There is also the progress of the Libre project, whose CPU uses a vector unit with RISC-V ISA vector extensions as a GPU.

https://www.eetimes.com/rv64x-a-free-open-source-gpu-for-risc-v/

A GPU like this is closer to the Commodore Hombre 3D solution with specialized SIMD instructions for 3D, although Hombre used a SIMD unit instead of a vector unit. Mitch Alsup, who was a 68k and 88k CPU developer (the 88k CPU SIMD unit had basic 3D SIMD instructions), helped design the Libre project vector unit. It appears Think Silicon is offering a scalable 3D GPU called NEOX based on these RISC-V vector extensions.

https://www.think-silicon.com/neox-graphics

This is one way to achieve a standard and open GPU without the need for an intermediate shader language and processing like SPIR-V, and it avoids the need for an HSA, which should significantly reduce the system footprint and improve efficiency in several ways. The goal of such a system is to scale down further than current CPU+GPU combinations, which this should achieve, but the big question is how well it will scale up. Using inherently weak RISC-V CPU cores would allow massively parallel SIMD performance but limit single-core CPU integer performance, which is vital for most games. Similar SIMD extensions could be used with a more powerful architecture for integer performance. The Larrabee microarchitecture used low-power in-order cores based on the Pentium P54C design released circa 1994, which competed with the 68060, but it was not able to scale down the power and area enough to be used as a competitive GPU or GPGPU (the 68060 had significantly better PPA than this Pentium CPU).

https://en.wikipedia.org/wiki/Larrabee_(microarchitecture)

Larrabee used a SIMD unit instead of a vector unit, but both are flexible enough for 3D and even ray tracing, as the link above states.

Quote:

A public demonstration of the Larrabee ray-tracing capabilities took place at the Intel Developer Forum in San Francisco on September 22, 2009. An experimental version of Enemy Territory: Quake Wars titled Quake Wars: Ray Traced was shown in real-time. The scene contained a ray traced water surface that reflected the surrounding objects, like a ship and several flying vehicles, accurately.

A second demo was given at the SC09 conference in Portland at November 17, 2009 during a keynote by Intel CTO Justin Rattner. A Larrabee card was able to achieve 1006 GFLops in the SGEMM 4Kx4K calculation.

|
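For scale, a rough back-of-the-envelope reading of that SGEMM figure, assuming "4Kx4K" means square matrices with N = 4096 and using the conventional count of 2N³ floating-point operations for a matrix multiply (one multiply and one add per inner-product term):

```latex
\begin{aligned}
\text{FLOP} &= 2N^3 = 2 \times 4096^3 \approx 1.374 \times 10^{11} \\
t &\approx \frac{1.374 \times 10^{11}\ \text{FLOP}}{1006 \times 10^{9}\ \text{FLOP/s}} \approx 0.137\ \text{s}
\end{aligned}
```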

It would be interesting to hear your thoughts on using a vector or SIMD unit in the CPU cores with GPU extensions.

Karlos Quote:

The ISA for RISC-V is well organised into capability levels, so I don't think the binary compatibility fragmentation is going to be as bad. It's not in anybody's interest to make an incompatible part. Extra instructions and features and reliance on those will lock together certain variants and their software and there may be many good reasons for that in some use cases. |

The old ARM extensions before AArch64 standardization were a pain and created enough cross-compatibility issues on Android to require an intermediate language. At the same time, the ability to customize and scale down cores for low power and area was key to ARM's success in embedded applications. This is no different from RISC-V, which has chosen optional extensions to scale lower at the expense of compatibility. Most RISC-V wins have been in embedded, if not deeply embedded, applications rather than the desktop. RISC-V can scale down very well, but I have doubts that it can scale up to competitive performance on desktops, laptops, pads, etc. RISC-V is basically MIPS with a few modern improvements and the baggage from its mistakes thrown away. The openness of the ISA and the trend toward open hardware are more appealing than the ISA itself.
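As an illustration of how those optional extensions are advertised: RISC-V reports the single-letter extensions a hart implements in the `misa` CSR, where bits 0 to 25 correspond to the letters A to Z. The helpers below are hypothetical and only decode a `misa` value that has already been read (reading the CSR itself requires machine mode); they show how software could check for a baseline such as RV64GC, whose single-letter extensions are I, M, A, F, D and C.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Decode the extension field of a misa value (bits 0..25 = 'A'..'Z')
   into a NUL-terminated string of letters. The MXL field in the top
   bits is ignored. */
static void misa_extensions(uint64_t misa, char out[27])
{
    size_t n = 0;
    for (int bit = 0; bit < 26; bit++) {
        if (misa & ((uint64_t)1 << bit))
            out[n++] = (char)('A' + bit);
    }
    out[n] = '\0';
}

/* True if every upper-case extension letter in 'required' is present. */
static bool misa_has_all(uint64_t misa, const char *required)
{
    for (; *required != '\0'; required++) {
        int bit = *required - 'A';
        if (bit < 0 || bit > 25 || !(misa & ((uint64_t)1 << bit)))
            return false;
    }
    return true;
}
```

For a value with the A, C, D, F, I and M bits set, `misa_extensions` produces "ACDFIM" and `misa_has_all(value, "IMAFDC")` returns true, while a check for "V" fails.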

Hammer

Posted on 26-Jul-2022 2:31:12 [ #43 ]

Elite Member

Joined: 9-Mar-2003

Posts: 5273

From: Australia

@xe54

NVIDIA's Turing already includes customized RISC-V cores, but they are hidden. For the desktop PC market, RISC-V's lack of adherence to a standard HAL and boot firmware dooms it in the same way as the fragmented 68K and PPC markets.

Last edited by Hammer on 26-Jul-2022 at 02:31 AM.

_________________

Ryzen 9 7900X, DDR5-6000 64 GB RAM, GeForce RTX 4080 16 GB

Amiga 1200 (Rev 1D1, KS 3.2, PiStorm32lite/RPi 4B 4GB/Emu68)

Amiga 500 (Rev 6A, KS 3.2, PiStorm/RPi 3a/Emu68)

Hans

Posted on 26-Jul-2022 2:36:03 [ #44 ]

Elite Member

Joined: 27-Dec-2003

Posts: 5067

From: New Zealand

@matthey

Quote:

Is this GPU ready to go? Or is this one of those "brochure sites" that are fishing to see if there's enough interest to actually develop it? I can't tell.

Also, do note that their fastest NEOX GPU is slower than the slowest Radeon HD Southern Islands GPU in terms of GFLOPS. It's probably competitive with the mobile GPUs in phones, although there are ARM Mali GPUs that can match the low-end of desktop GPUs.

If we did switch to ARM or RISC-V, we'd have to accept at least a temporary drop in GPU performance either way (not that we're getting max performance right now...).

Quote:

It would be interesting to hear your thoughts on using a vector or SIMD unit in the CPU cores with GPU extensions. |

It reminds me of when the PowerPC Cell processor came out, and there was some research into using the Cell cores as a GPU instead of having a dedicated one. The PS3 added a proper GPU in the end, and I see that Intel's Larrabee was cancelled. So far, nobody has been able to make it work better than existing GPUs.

Having a dedicated GPU with hardware for texture & vertex fetches, rasterization, etc., is simply faster and more efficient at graphics.** A separate GPU also allows the CPU to do other tasks while the GPU processes the graphics.

AFAIK, both AMD and nVidia use Single Instruction Multiple Thread (SIMT) architectures in their GPUs. They have large register files***, and multiple threads running in lockstep. It's a nightmare to program, but having threads in lockstep improves cache & memory bandwidth usage, because you have threads for adjacent pixels reading from areas close in memory rather than more random access.

If I were to design a GPU (which I have thought about), I'd probably go for an SIMTish architecture, with each core being as simple and small as possible (which rules out SIMD). I'd try to make the ISA easier to program, whilst still maintaining some sort of sync between adjacent threads like SIMT does. Adding features like SIMD increases the amount of silicon per core, and I suspect that having more threads should work better for graphics than having cores that can do more per clock. This is just a hunch though. I have no data to back this up, and could be wrong.
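To make the lockstep idea concrete, here is a sketch that emulates one hypothetical 8-lane SIMT "warp" in plain C. Every lane steps through the same instruction stream, and a per-lane active mask decides which lanes commit results when the threads diverge on an if/else. The lane count and the shading function are invented for illustration; real GPUs use wider warps or wavefronts (e.g. 32 or 64 threads) and handle the mask in hardware.

```c
#include <stdint.h>

#define LANES 8  /* hypothetical warp width */

/* One warp shades 8 adjacent pixels. Each lane has its own x coordinate,
   but all lanes execute both sides of the branch together; the mask
   decides which lanes keep the result of each side. */
static void shade_warp(const int x[LANES], int out[LANES])
{
    uint8_t active = 0;

    /* Evaluate the branch condition in every lane. */
    for (int lane = 0; lane < LANES; lane++)
        if (x[lane] % 2 == 0)
            active |= (uint8_t)(1u << lane);

    /* "then" side: only lanes whose mask bit is set commit. */
    for (int lane = 0; lane < LANES; lane++)
        if (active & (1u << lane))
            out[lane] = x[lane] * 2;

    /* "else" side: the same pass again with the mask inverted. */
    for (int lane = 0; lane < LANES; lane++)
        if (!(active & (1u << lane)))
            out[lane] = -x[lane];
}
```

When lanes diverge like this, the warp pays for both sides of the branch, which is part of why SIMT code is awkward to write well even though lockstep execution keeps neighbouring pixels' memory accesses close together.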

Hans

** At least, for rasterized graphics. Raytracing is a different game, where the random nature of shooting rays through the scene can make it hard to efficiently use the cache and memory bandwidth. IIRC, some raytracers sort rays by direction in order to reduce this problem.
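A minimal sketch of that sorting idea, using a ray struct of our own: each ray is classified by the signs of its direction components into one of eight octants, and the batch is sorted by that key so rays heading the same general way are traced together and tend to touch the same parts of the scene. Production renderers may use finer keys; this is only the coarsest version.

```c
#include <stdlib.h>

typedef struct {
    float ox, oy, oz;   /* origin */
    float dx, dy, dz;   /* direction */
} Ray;

/* Octant 0..7 from the signs of the direction components. */
static int ray_octant(const Ray *r)
{
    return (r->dx < 0.0f) | ((r->dy < 0.0f) << 1) | ((r->dz < 0.0f) << 2);
}

static int cmp_octant(const void *a, const void *b)
{
    int oa = ray_octant((const Ray *)a);
    int ob = ray_octant((const Ray *)b);
    return (oa > ob) - (oa < ob);
}

/* Group rays that travel in the same general direction. */
static void sort_rays_by_direction(Ray *rays, size_t count)
{
    qsort(rays, count, sizeof *rays, cmp_octant);
}
```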

*** The register files are so large that Warp3D Nova uses only registers for all shaders. The compiler currently doesn't have the ability to spill registers to VRAM.

Last edited by Hans on 26-Jul-2022 at 02:38 AM.

_________________

http://hdrlab.org.nz/ - Amiga OS 4 projects, programming articles and more. Home of the RadeonHD driver for Amiga OS 4.x project.

https://keasigmadelta.com/ - More of my work.

Hammer

Posted on 26-Jul-2022 2:40:03 [ #45 ]

Elite Member

Joined: 9-Mar-2003

Posts: 5273

From: Australia

@matthey

Quote:

Ironically, RISC-V is used or being evaluated by several top GPU developers including Nvidia, Imagination Technologies and Intel for the "management" processor. Nvidia and Imagination Technologies are likely using RISC-V processors in current GPU products though they may not disclose which particular products are using RISC-V. Imagination Technologies further claims their GPUs are compatible with RISC-V CPUs.

|

NVIDIA Turing and Ampere SKUs already have customized RISC-V cores, but they are hidden from application programmers.

RISC-V is present in Turing and Ampere to lower the host CPU's driver workload, since AMD's RDNA driver places less workload on the host CPU than NVIDIA's does.

RISC-V's lack of adherence to a standard HAL and boot environment leaves the RISC-V venture like the fragmented 68K and PPC markets.

The ARM-based Raspberry Pi platform follows the old-school low-cost "$99" ZX Spectrum model.

Raspberry Pi can run the Genshin Impact test ... slowly...

Last edited by Hammer on 26-Jul-2022 at 02:52 AM.


Hammer

Posted on 26-Jul-2022 2:49:17 [ #46 ]

Elite Member

Joined: 9-Mar-2003

Posts: 5273

From: Australia

@xe54

Quote:

Makes me laugh that one of the greatest modern upgrades for AMIGAs is the PI-zero (pistorm project) which uses an ARM processor (but only in the "good" ways) yet those developers aren't arguing about endians or CPUS. They looked at price, performance and accessibility rather than market size and potential CPU partners.

|

FYI, the ARM Cortex-A53 in the Pi Zero 2 and Pi 3A+ used with PiStorm supports both big- and little-endian formats:

ARM cores support both modes, but are most commonly used in, and typically default to, little-endian mode (1).
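Whichever mode the core runs in, software that exchanges data with the big-endian 68k side can avoid depending on host byte order by assembling values from bytes explicitly. A small sketch, with function names of our own choosing:

```c
#include <stdint.h>

/* Read a 32-bit big-endian (68k byte order) value from a byte buffer.
   Gives the same result on little- and big-endian hosts. */
static uint32_t load_be32(const uint8_t *p)
{
    return ((uint32_t)p[0] << 24) | ((uint32_t)p[1] << 16) |
           ((uint32_t)p[2] << 8)  |  (uint32_t)p[3];
}

/* Report the byte order the host is currently running in:
   1 for little-endian, 0 for big-endian. */
static int host_is_little_endian(void)
{
    const uint16_t probe = 1;
    return *(const uint8_t *)&probe == 1;
}
```

`load_be32` on the bytes 0x12, 0x34, 0x56, 0x78 yields 0x12345678 regardless of what `host_is_little_endian` reports.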

Reference

1. https://developer.arm.com/documentation/den0013/d/Porting/Endianness

I have a PiStorm and a Pi 3A+ for my A500 and other interests.

Last edited by Hammer on 27-Jul-2022 at 04:53 AM.

Last edited by Hammer on 26-Jul-2022 at 02:49 AM.


Trekiej

Posted on 26-Jul-2022 3:53:31 [ #47 ]

Cult Member

Joined: 17-Oct-2006

Posts: 890

From: Unknown

@xe54

Would it be worth adding a special cache to a CPU to hold emulated code?

_________________

John 3:16

NutsAboutAmiga

Posted on 26-Jul-2022 10:58:21 [ #48 ]

Elite Member

Joined: 9-Jun-2004

Posts: 12817

From: Norway

@Trekiej

I don't see how that would help. A JIT does not change the silicon; it only takes one instruction and finds its equivalent in another instruction set (if no equivalent is found, several instructions might be needed). It works similarly to a compiler, which is why you see high CPU spikes caused by the JIT having to translate an unconverted section.

Once a code section is converted, the instruction cache is used while executing the converted code.

The JIT is triggered when it runs out of JIT cache (a temporary place for converted code), or when execution reaches a part that is not yet converted.
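The cycle described above (translate on a miss, reuse the translation on a hit, flush everything when the JIT cache fills up) can be sketched as a tiny translation cache keyed by the guest program counter. This is a hypothetical illustration, not any particular emulator's design: `translate_block` stands in for the real code generator and only returns a fake host address, and "full" is modelled as a fixed number of translated blocks.

```c
#include <stdint.h>
#include <string.h>

#define TC_SLOTS 64   /* hypothetical: lookup slots, and blocks that fit in the code buffer */

typedef struct {
    uint32_t  guest_pc;   /* 68k address where the block starts */
    uintptr_t host_code;  /* address of the translated block, 0 = empty slot */
} TcEntry;

typedef struct {
    TcEntry  slot[TC_SLOTS];
    unsigned used;          /* blocks emitted since the last flush */
    unsigned translations;  /* calls to the (expensive) translator */
    unsigned flushes;
} TransCache;

/* Hypothetical stand-in for the JIT back end: pretend to emit host code. */
static uintptr_t translate_block(TransCache *tc, uint32_t guest_pc)
{
    tc->translations++;
    return (uintptr_t)0x100000u + guest_pc;  /* fake, non-zero "host address" */
}

/* Throw away every translation, as when the JIT cache has been used up. */
static void tc_flush(TransCache *tc)
{
    memset(tc->slot, 0, sizeof tc->slot);
    tc->used = 0;
    tc->flushes++;
}

/* Return host code for guest_pc, translating it first on a miss. */
static uintptr_t tc_lookup(TransCache *tc, uint32_t guest_pc)
{
    TcEntry *e = &tc->slot[(guest_pc >> 1) % TC_SLOTS];  /* 68k code is word aligned */

    if (e->host_code != 0 && e->guest_pc == guest_pc)
        return e->host_code;                 /* hit: reuse the converted code */

    if (tc->used == TC_SLOTS)                /* JIT cache full: flush it */
        tc_flush(tc);

    e->guest_pc  = guest_pc;                 /* miss: this is where the CPU spike happens */
    e->host_code = translate_block(tc, guest_pc);
    tc->used++;
    return e->host_code;
}
```

A collision in this direct-mapped table simply overwrites the older entry, so that block would be translated again on its next use; a real JIT would more likely use a hash table plus a separate code buffer.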

There are three types of branches: conditional, indirect and direct.

Indirect branches are unpredictable, while conditional and direct branches are predictable.

Places with an unpredictable outcome need to be tested at run time (slower).

If you map registers 1 to 1, you can generate more efficient code, but if you have a host OS, some of the registers might be reserved. If no host registers can be used, the guest registers need to be mapped to memory, and this is where you have the biggest loss. In addition, if the host CPU does not read and write bytes in the same order, you need to worry about that.
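A hypothetical sketch of that register-mapping decision: the 16 68k registers (D0-D7, A0-A7) are given whatever host registers the OS leaves free, in order, and any that don't fit live in a context structure in memory, which is where the extra loads and stores come from. The host register numbers in the usage example are just examples, and a real JIT might choose which guest registers get host registers based on how often they are used.

```c
#include <stddef.h>
#include <stdint.h>

#define GUEST_REGS 16   /* 68k: D0-D7 are 0-7, A0-A7 are 8-15 */

typedef struct {
    int    in_host_reg;  /* 1: kept in a host register, 0: spilled to memory */
    int    host_reg;     /* host register number, or -1 when spilled */
    size_t mem_offset;   /* offset into the guest register context when spilled */
} RegLoc;

/* Give guest registers the free host registers in order; whatever does
   not fit is kept in a memory-resident context structure, so every
   access to it costs an extra load or store. */
static void map_guest_regs(const int *free_host_regs, size_t n_free,
                           RegLoc map[GUEST_REGS])
{
    for (size_t g = 0; g < GUEST_REGS; g++) {
        if (g < n_free) {
            map[g].in_host_reg = 1;
            map[g].host_reg    = free_host_regs[g];
            map[g].mem_offset  = 0;
        } else {
            map[g].in_host_reg = 0;
            map[g].host_reg    = -1;
            map[g].mem_offset  = g * sizeof(uint32_t);  /* one 32-bit slot per register */
        }
    }
}
```

With ten free host registers, D0-D7, A0 and A1 stay in registers, and A2-A7 end up at offsets 40 to 60 of the context structure.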

Two things are important: how fast you convert the code, and how good the quality of the converted code is. These are the main issues to worry about.

Once you have overwritten the JIT cache, you need to flush the cache. It might be good if you could divide the instruction cache up so that the JIT compiler never needs to be flushed, since it is only used when new code needs to be converted, but the CPU cache is not unlimited anyway. I doubt you would be able to fit the full JIT compiler in the instruction cache.

On a multi-core CPU, the instruction cache is already divided by core, so maybe you can take advantage of that.

Last edited by NutsAboutAmiga on 26-Jul-2022 at 05:13 PM.

Last edited by NutsAboutAmiga on 26-Jul-2022 at 11:18 AM.

Last edited by NutsAboutAmiga on 26-Jul-2022 at 11:05 AM.

Last edited by NutsAboutAmiga on 26-Jul-2022 at 11:03 AM.

_________________

http://lifeofliveforit.blogspot.no/

Facebook::LiveForIt Software for AmigaOS

AmigaNoob

Posted on 26-Jul-2022 15:23:12 [ #49 ]

Member

Joined: 14-Oct-2021

Posts: 15

From: Unknown

matthey

Posted on 26-Jul-2022 22:27:50 [ #50 ]

Super Member

Joined: 14-Mar-2007

Posts: 1999

From: Kansas

Hans Quote:

Is this GPU ready to go? Or is this one of those "brochure sites" that are fishing to see if there's enough interest to actually develop it? I can't tell.

|

NEOX may be fishing, but Think Silicon has hardware demonstration videos of their NEMA FPGA GPUs, which use VLIW in a classic CPU+GPU configuration (with ARM CPU cores).

https://www.youtube.com/user/ThinkSilicon

NEOX GPUs are mentioned and a watch is shown, but that looks like marketing fluff. The NEMA GPU hardware appears to be real and specs are given, so the company may know what they are doing. There was supposedly a NEOX demonstration at Embedded World 2022 in Nuremberg, Germany, but I can't find any videos. There are claims that the NEOX GPU is further along in development than the Libre GPU, which was planning test silicon, as I recall.

https://wccftech.com/think-silicon-displays-first-low-powered-risc-v-3d-gpu-at-embedded-world-2022/ Quote:

Michael Larabel of Linux website Phoronix details a previous community-based GPU design, the "Libre RISC-V," which would have the same essentials through a system-on-chip design as Think Silicon. The project is still in development and is not as close as Think Silicon's current plans. Larabel states that the Libre RISC-V will utilize the OpenPOWER ISA, "a reduced instruction set computer (RISC) instruction set architecture (ISA) currently developed by the OpenPOWER Foundation," which is currently overseen by IBM. The project was initially developed by IBM and the Power.org industry group, which is now presently defunct.

|

Hans Quote:

Also, do note that their fastest NEOX GPU is slower than the slowest Radeon HD Southern Islands GPU in terms of GFLOPS. It's probably competitive with the mobile GPUs in phones, although there are ARM Mali GPUs that can match the low-end of desktop GPUs.

|

There is a night-and-day difference between a GPU designed for performance and one designed for low power. Except for the high-end desktop GPU market, most hardware favors better CPU+GPU integration and power efficiency over brute-force GPU hardware parallelism. Most computer hardware isn't even for the desktop anymore, which is why I think GPUs like Imagination Technologies' new low-power ray tracing GPUs, and even Think Silicon's GPUs, have the potential to disrupt the status quo in the GPU market. They do need mass-produced devices that make their products a standard, like a new Raspberry Pi using the Imagination Technologies ray tracing GPUs, for example. The Raspberry Pi Foundation is now designing its own SoCs (fabless), so this could be where they are headed already. A more open system could be a low-cost competitor to consoles while also allowing the general-purpose computing that consoles do not allow. This is similar to how the classic Amiga was a general-purpose computer but was low cost enough to compete with consoles back in the day, though CBM could have done a better job of continuing to improve, integrate, cost-reduce and reduce power to compete better. The original Amiga had the CPU+GPU integrated, as is becoming popular today, but 3D support is now standard even on many mobile devices.

Hans Quote:

If we did switch to ARM or RISC-V, we'd have to accept at least a temporary drop in GPU performance either way (not that we're getting max performance right now...).

|

With RISC-V, there may be a drop in CPU performance as well. RISC-V relies on core designs to add back performance lost to the simple and weak ISA. Also, most off-the-shelf RISC-V chips currently use an older chip fabrication process due to lack of demand and low production. I agree that ARM is more appealing if using off-the-shelf chips, especially for Amiga use. RISC-V has advantages as low-cost, low-power cores for custom mass-produced SoCs, which are becoming more and more popular for devices (but then 68k or PPC cores would be better for Amiga compatibility).

Hans Quote:

It reminds me of when the PowerPC Cell processor came out, and there was some research into using the Cell cores as a GPU instead of having a dedicated one. The PS3 added a proper GPU in the end, and I see that Intel's Larrabee was cancelled. So far, nobody has been able to make it work better than existing GPUs.

|

The Cell CPU didn't have enough SPEs for a high-performance GPU. Detaching SIMD units from the integer CPU cores should have allowed for more of them at the expense of more difficulty in programming. Perhaps Cell and Larrabee did not have specialized enough SIMD "GPU" instructions? Perhaps the RV64X vector ISA extension has more GPU-specific instructions? Perhaps the RISC-V integer cores are small enough in area that enough additional cores can be added to be competitive in GPU performance, albeit with weakened single-core CPU performance?

Hans Quote:

Having a dedicated GPU with hardware for texture & vertex fetches, rasterization, etc., is simply faster and more efficient at graphics.** A separate GPU also allows the CPU to do other tasks while the GPU processes the graphics.

|

The increased parallelism of GPU coprocessors is partially offset by the cache coherency inefficiencies, memory copying overhead and increased memory usage which is significant, especially for low power GPUs. There is a reason why HSA is used by consoles, Nvidia has GPUDirect and most GPU designers have memory zero copy technologies.

Hans Quote:

AFAIK, both AMD and nVidia use Single Instruction Multiple Thread (SIMT) architectures in their GPUs. They have large register files***, and multiple threads running in lockstep. It's a nightmare to program, but having threads in lockstep improves cache & memory bandwidth usage, because you have threads for adjacent pixels reading from areas close in memory rather than more random access.

If I were to design a GPU (which I have thought about), I'd probably go for an SIMTish architecture, with each core being as simple and small as possible (which rules out SIMD). I'd try to make the ISA easier to program, whilst still maintaining some sort of sync between adjacent threads like SIMT does. Adding features like SIMD increases the amount of silicon per core, and I suspect that having more threads should work better for graphics than having cores that can do more per clock. This is just a hunch though. I have no data to back this up, and could be wrong.

|

SIMD is simple to implement and has a very low cost for integer datatypes. I would expect multithreading would be important for keeping the GPU cores working during long thread stalls. SIMT does sound like a pain to program though. Cache and memory optimization would be difficult for sure.
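A small illustration of why integer SIMD can be so cheap: packed-byte addition only needs the carry chain cut at lane boundaries. The classic SWAR ("SIMD within a register") trick below does this in portable C on a 32-bit word holding four 8-bit lanes. It is a software analogy for a SIMD adder that gates carries between lanes, not a description of any specific core.

```c
#include <stdint.h>

/* Add four packed 8-bit lanes independently (each lane wraps modulo 256).
   Adding only the low 7 bits of each lane can never carry into the next
   lane; the top bit of each lane is then fixed up with XOR. */
static uint32_t add_u8x4(uint32_t a, uint32_t b)
{
    const uint32_t low7 = 0x7F7F7F7Fu;
    const uint32_t high = 0x80808080u;
    return ((a & low7) + (b & low7)) ^ ((a ^ b) & high);
}
```

For example, adding 0xFF00FF80 and 0x01010180 gives 0x00010000: each lane wraps on its own instead of carrying into its neighbour.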

AmigaNoob Quote:

We have talked about the Libre project and the schism with the RISC-V developers on this forum before. A similar vector extension can go with any integer ISA as I mentioned before. I don't recall what differences of the Libre vector extension caused the schism. I believe the Think Silicon NEOX GPU is using the Libre vector extension and encoding which is called RV64X. Think Silicon probably picked it up as it is an open source design and there is a professionally implemented reference design (Mitch Alsup assisted in the design). There is more information on RV64X and info on the reference design in the following thread.

https://www.linuxadictos.com/en/rv64x-una-gpu-de-codigo-abierto-basada-en-tecnologias-risc-v.html

AmigaNoob Quote:

Isn't he only from the 88k team? |

I believe Mitch Alsup was part of the 68k development team, although not part of the original 68000 team. The information I have is hearsay, but I have heard it from separate sources. I wish the Computer History Museum would do an oral history of him.

https://www.youtube.com/user/ComputerHistory/videos

There are some great computer history videos, including oral history interviews, but Mitch is not on the list. As good a chip designer as he is, the demise of the Motorola 88k architecture, the limited success of the Ross Technology hyperSPARC (Dr. Roger Ross directed the development of Motorola's MC68030 and RISC-based 88000 microprocessor families) and the cancellation of the AMD K9 (AMD64/x86-64) CPU he was chief architect of weigh on his legacy. He was also shader core architect for a Samsung GPU, but that doesn't bring much exposure. His LinkedIn profile does not mention 68k development, but he arrived at Motorola in 1983 and the 88k was not released until 1988. Motorola was all about the 68k before the 88k, and it likely did not take 5 years to develop the 88k (68000 development took about 3 years).

https://www.linkedin.com/in/mitch-alsup-8691537


Hammer

Posted on 27-Jul-2022 5:16:39 [ #51 ]

Elite Member

Joined: 9-Mar-2003

Posts: 5273

From: Australia

@matthey

Quote:

Think Silicon's GPUs have the potential to disrupt the status quo in the GPU market.

|

Unlikely, when the handset SoC market is dominated by Qualcomm's Adreno, followed by Arm Ltd's Mali.

The ability to play Genshin Impact at good frame rates is the handset SoC's "can it run Crysis" moment.

https://youtu.be/wkH7ZcZjwi8?t=98

A Pi 4 runs Genshin Impact (Unity3D) at slideshow frame rates and with render bugs.

Broadcom VideoCore VI is trash.

Quote:

Cell CPU didn't have enough SPEs for a high performance GPU.

|

Cell's SPEs lack the fixed-function graphics hardware of ATI Xenos. Modern GPGPUs are not DSPs.

ATI Xenos has 48 unified shaders, 192 pixel co-processors, hardware ROPs, hardware tessellation, hardware rasterization, hardware early-Z cull, texture filtering, move engines, hardware texture decompression, etc. Modern GPUs have large SRAM register storage since SRAM is the fastest known data storage tech. The SPU's 128 registers are not enough!

The PS5's DSP is based on an AMD GCN CU with the raster hardware removed.

Each Direct3D evolution increases the register storage requirements.

Intel Larrabee has been replaced by Intel Xe and ARC.

Last edited by Hammer on 28-Jul-2022 at 06:24 AM.

Last edited by Hammer on 27-Jul-2022 at 05:36 AM.


Hammer

Posted on 27-Jul-2022 5:33:08 [ #52 ]

Elite Member

Joined: 9-Mar-2003

Posts: 5273

From: Australia

@matthey

Quote:

The increased parallelism of GPU coprocessors is partially offset by the cache coherency inefficiencies, memory copying overhead and increased memory usage which is significant, especially for low power GPUs. There is a reason why HSA is used by consoles, Nvidia has GPUDirect and most GPU designers have memory zero copy technologies.

|

On a PC with dGPU, the Resizable BAR feature enables the CPU to directly access the entire GPU memory when zero-copy is needed for certain workloads.

The downside of a pure shared-memory design is CPU vs. GPU contention on the shared bus, i.e. the GPU's frame buffer I/O shouldn't be disturbed by CPU I/O access.

CPU should be able to perform its workload without GPU interference.

GPU should be able to perform its workload without CPU interference.

Modern GPUs have a multi-MB L2 cache connected to the ROP and TMU read/write I/O in an attempt to mitigate memory bus contention issues.

Cache coherency is not a major issue when a programmer partitions the workload between CPU and GPU in a proper manner.

Learn the lesson from a stock Amiga 1200 with Fast RAM vs. without Fast RAM.

Current game consoles are designed with a certain lower-cost budget target.

Last edited by Hammer on 28-Jul-2022 at 06:25 AM.

Last edited by Hammer on 27-Jul-2022 at 05:35 AM.


Hammer

Posted on 27-Jul-2022 5:49:35 [ #53 ]

Elite Member

Joined: 9-Mar-2003

Posts: 5273

From: Australia

@Hans

Quote:

Raytracing is a different game, where the random nature of shooting rays through the scene can make it hard to efficiently use the cache and memory bandwidth

|

On modern GPUs with raytracing, a bounding volume hierarchy (BVH) acceleration structure is used for mass intersection tests.

Raytracing is a search engine and intersection branch test problem.
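The core operation when walking a BVH is testing a ray against each node's axis-aligned bounding box. A minimal sketch of the standard "slab" test, assuming the caller has precomputed the reciprocal of the ray direction (a common trick, not something stated in the post); the ray-tracing units in modern GPUs accelerate this kind of test in hardware.

```c
#include <stdbool.h>

typedef struct { float min[3], max[3]; } Aabb;

/* Slab test: intersect the ray with each pair of parallel box planes and
   check that the entry/exit intervals still overlap within [t_min, t_max].
   inv_dir holds 1/direction per axis. */
static bool ray_hits_box(const float orig[3], const float inv_dir[3],
                         const Aabb *box, float t_min, float t_max)
{
    for (int axis = 0; axis < 3; axis++) {
        float t0 = (box->min[axis] - orig[axis]) * inv_dir[axis];
        float t1 = (box->max[axis] - orig[axis]) * inv_dir[axis];
        if (t0 > t1) { float tmp = t0; t0 = t1; t1 = tmp; }  /* ray points the other way */
        if (t0 > t_min) t_min = t0;
        if (t1 < t_max) t_max = t1;
        if (t_max < t_min) return false;   /* intervals no longer overlap: miss */
    }
    return true;
}
```

During traversal, a miss lets the whole subtree under that node be skipped, which is what turns the "search" part of ray tracing from a linear scan into a tree walk.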

Blender 3D raytracing on RTX 3080 Ti is very fast.

Hans

Posted on 27-Jul-2022 5:51:52 [ #54 ]

Elite Member

Joined: 27-Dec-2003

Posts: 5067

From: New Zealand

@matthey

Quote:

| There is a night and day difference between a GPU designed for performance and low power. Except for the high end desktop GPU market, most hardware has better CPU+GPU integration and power efficiency rather than brute force GPU hardware parallelism. |

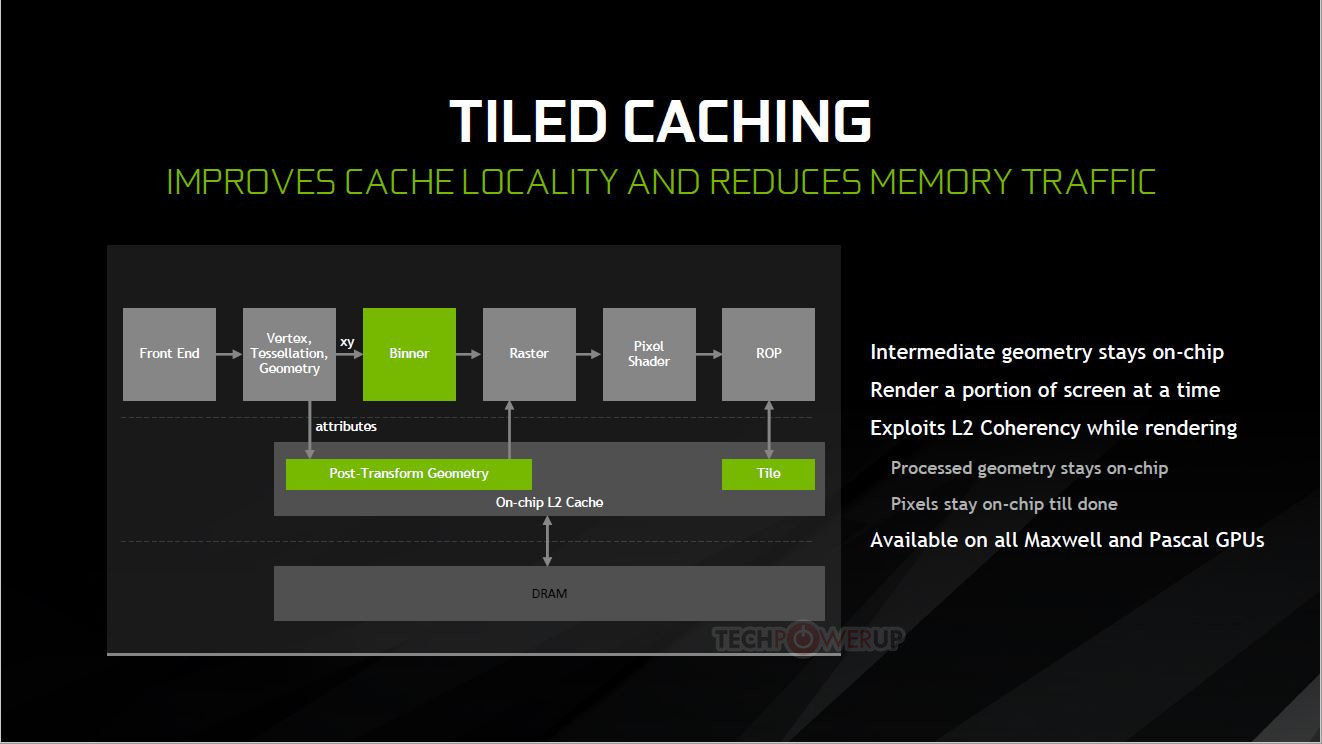

Not sure what you mean. The primary difference between a desktop GPU and a mobile one is that the mobile GPU renders things in tiles to reduce memory bandwidth usage. Before rasterization, it'll figure out which triangles affect which tile, so that each tile can be processed separately. This reduces memory bandwidth requirements, at the cost of additional latency (there's an additional step involved).
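The binning step described above can be sketched as follows: compute each triangle's screen-space bounding box and append the triangle's index to every tile that box overlaps. Tile size, screen size and the fixed-size bins are hypothetical, triangles are assumed to be already clipped to the screen, and real hardware may use tighter tests than a bounding box. This only shows the shape of the extra pass done before rasterization.

```c
#include <stddef.h>

#define TILE         32   /* hypothetical tile size in pixels */
#define SCREEN_W     256
#define SCREEN_H     128
#define TILES_X      (SCREEN_W / TILE)
#define TILES_Y      (SCREEN_H / TILE)
#define MAX_PER_TILE 64

typedef struct { float x[3], y[3]; } Tri;   /* screen-space vertices */

typedef struct {
    int count;
    int tri[MAX_PER_TILE];                  /* indices of triangles touching this tile */
} TileBin;

static int clampi(int v, int lo, int hi) { return v < lo ? lo : v > hi ? hi : v; }
static float min3(float a, float b, float c) { float m = a < b ? a : b; return m < c ? m : c; }
static float max3(float a, float b, float c) { float m = a > b ? a : b; return m > c ? m : c; }

/* Pass 1 of a tiled renderer: record which triangles affect which tile.
   Pass 2 (not shown) would rasterize one tile at a time in on-chip memory. */
static void bin_triangles(const Tri *tris, size_t n, TileBin bins[TILES_Y][TILES_X])
{
    for (size_t i = 0; i < n; i++) {
        const Tri *t = &tris[i];
        int tx0 = clampi((int)min3(t->x[0], t->x[1], t->x[2]) / TILE, 0, TILES_X - 1);
        int tx1 = clampi((int)max3(t->x[0], t->x[1], t->x[2]) / TILE, 0, TILES_X - 1);
        int ty0 = clampi((int)min3(t->y[0], t->y[1], t->y[2]) / TILE, 0, TILES_Y - 1);
        int ty1 = clampi((int)max3(t->y[0], t->y[1], t->y[2]) / TILE, 0, TILES_Y - 1);
        for (int ty = ty0; ty <= ty1; ty++)
            for (int tx = tx0; tx <= tx1; tx++) {
                TileBin *b = &bins[ty][tx];
                if (b->count < MAX_PER_TILE)  /* a real binner would grow the list */
                    b->tri[b->count++] = (int)i;
            }
    }
}
```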

Samsung have a good article on how it works (link).

Despite the tiling advantages, if a workload needs 100 GOps to run, then it needs 100 GOps to run, and a 1000 GFLOPS GPU will process it faster than a 400 GFLOPS GPU (assuming a different bottleneck didn't get in the way).
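Worked through with the numbers from the post, treating one GOp as one GFLOP of work and ignoring every other bottleneck (W is the work and R the sustained throughput; the symbols are introduced only for this example):

```latex
t = \frac{W}{R}, \qquad
t_{1000} = \frac{100\ \text{GFLOP}}{1000\ \text{GFLOP/s}} = 0.1\ \text{s}, \qquad
t_{400} = \frac{100\ \text{GFLOP}}{400\ \text{GFLOP/s}} = 0.25\ \text{s}
```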

Quote:

| The Cell CPU didn't have enough SPEs for a high performance GPU. Detaching SIMD units from the integer CPU cores should have allowed for more of them at the expense of more difficulty in programming. Perhaps Cell and Larrabee did not have specialized enough SIMD "GPU" instructions? |

The Cell CPU doesn't have hardware z-buffering, rasterization, texture fetching, etc. Z-buffering in software is slow even before you start tiling the buffer. GPUs store 2D/3D data in tiled formats so that adjacent pixels are closer together in memory. This is critical to improving cache usage, and is much better performed in hardware.
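One common way to lay pixels out so that 2D neighbours end up close in memory is Morton (Z-order) addressing, where the bits of x and y are interleaved. Actual GPU tiling formats are vendor-specific and often undocumented, so treat this as an illustration of the principle rather than any particular GPU's layout:

```c
#include <stdint.h>

/* Spread the low 16 bits of v so that a zero bit sits between each of them. */
static uint32_t part1by1(uint32_t v)
{
    v &= 0x0000FFFFu;
    v = (v | (v << 8)) & 0x00FF00FFu;
    v = (v | (v << 4)) & 0x0F0F0F0Fu;
    v = (v | (v << 2)) & 0x33333333u;
    v = (v | (v << 1)) & 0x55555555u;
    return v;
}

/* Morton (Z-order) index: x bits in even positions, y bits in odd ones. */
static uint32_t morton2d(uint32_t x, uint32_t y)
{
    return part1by1(x) | (part1by1(y) << 1);
}
```

In a row-major layout, pixel (0,1) is a whole row away from (0,0); in Z-order the 2x2 block covering (0..1, 0..1) occupies the four consecutive indices 0 to 3, so a cache line fetched for one pixel likely also holds its vertical neighbours.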

Quote:

| Perhaps the RV64X vector ISA extension has more GPU specific instructions? Perhaps the RISC-V integer cores are small enough area that enough more cores can be added to be competitive in GPU performance albeit with weakened single core CPU performance? |

Perhaps. I haven't looked at their ISA.

Quote:

| The increased parallelism of GPU coprocessors is partially offset by the cache coherency inefficiencies, memory copying overhead and increased memory usage which is significant, especially for low power GPUs. There is a reason why HSA is used by consoles, Nvidia has GPUDirect and most GPU designers have memory zero copy technologies. |

Not sure what you mean by this. GPUs have had the ability to read data directly from main memory for a very long time using DMA and GART. This is great for streaming data to/from the GPU without needing to copy anything. Warp3D Nova can do this too.**

What's nice about HSA is that the CPU's MMU and the GPU's IOMMU have an identical view of memory, so you don't need to track two separate address spaces. However, the overhead of mapping/unmapping RAM into the GPU's IOMMU is irrelevant if you pre-allocate the buffers, because that way mapping/unmapping doesn't happen during rendering.

Quote:

| SIMD is simple to implement and has a very low cost for integer datatypes. I would expect multithreading would be important for keeping the GPU cores working during long thread stalls. SIMT does sound like a pain to program though. Cache and memory optimization would be difficult for sure. |

SISD is even simpler and smaller.

Quite a bit of logic is needed to implement a hardware multiplier, or a full FPU. You can either put 4 of them in a single SIMD unit, or put 1 each in 4 small cores (with shared instruction and data caches, or the silicon real estate starts to balloon again). Which is better depends on the workload.

Hans

** Warp3D Nova is hampered by having to convert data between a big-endian CPU and little-endian GPU. That's always going to take extra time, putting us at a disadvantage.

Last edited by Hans on 27-Jul-2022 at 06:01 AM.

Last edited by Hans on 27-Jul-2022 at 05:53 AM.

_________________

http://hdrlab.org.nz/ - Amiga OS 4 projects, programming articles and more. Home of the RadeonHD driver for Amiga OS 4.x project.

https://keasigmadelta.com/ - More of my work. |

| | Status: Offline |

| |  xe54 xe54

|  |

Re: RISC V Laptop announced... Could this be the ultimate AMIGA hardware?

Posted on 27-Jul-2022 20:39:45

| | [ #55 ] |

| |

|

Regular Member

|

Joined: 16-Feb-2005

Posts: 122

From: Unknown | | |

|

| @Hammer

Quote:

| FYI, Pi Storm's Pi Zero 2's and Pi 3A+'s ARM Cortex A53 supports both big and little endian formats |

Thanks for the info. I meant it more in the sense that I find it funny that people on this forum keep bringing up endianness on ARM, whereas the developers of that project didn't seem to worry about it.

It is a sensational bit of work, and I know many on here think it is cheating, but I think of it as a new co-processor! Pi Storm has the price point and feature set that this community needs! |

| | Status: Offline |

| |  xe54 xe54

|  |

Re: RISC V Laptop announced... Could this be the ultimate AMIGA hardware?

Posted on 27-Jul-2022 20:46:58

| | [ #56 ] |

| |

|

Regular Member

|

Joined: 16-Feb-2005

Posts: 122

From: Unknown | | |

|

| @Trekiej

Quote:

| Would it be worth adding a special cache to a cpu to hold Emulated Code? |

I think an FPGA would satisfy that desire. I linked earlier to dev information about boards that are set up to develop CPUs that link to the soft cores in the FPGAs. The dev boards are expensive and were designed so that you can complete a design before sending it off to be fabricated at scale, but they can be used standalone too. In the future, these dev boards will come down in price and would suit the retro market well! |

| | Status: Offline |

| |  xe54 xe54

|  |

Re: RISC V Laptop announced... Could this be the ultimate AMIGA hardware?

Posted on 27-Jul-2022 20:59:44

| | [ #57 ] |

| |

|

Regular Member

|

Joined: 16-Feb-2005

Posts: 122

From: Unknown | | |

|

| @Hans @matthey @Hammer

These are all valid points.

RISC-V doesn't have to replace only the CPU and can be friends with ARM too!

I agree that the lack of HAL is an issue for the time being, though I know that solutions exist and are being implemented.

ARM is lovely too! Faster and more available at the moment, a good home for the interim ;)

PiStorm and Vampire are both great, but feel a little DIY.

Thanks for keeping the debate on topic and in good spirits! |

| | Status: Offline |

| |  Hammer Hammer

|  |

Re: RISC V Laptop announced... Could this be the ultimate AMIGA hardware?

Posted on 28-Jul-2022 5:43:12

| | [ #58 ] |

| |

|

Elite Member

|

Joined: 9-Mar-2003

Posts: 5273

From: Australia | | |

|

| @Hans

Quote:

Not sure what you mean. The primary difference between a desktop GPU and a mobile one, is that the mobile GPU renders things in tiles to reduce memory bandwidth usage. Before rasterization, it'll figure out which triangles affect which tile, so that each can be processed separately. This reduces memory bandwidth requirements, at the cost of additional latency (there's an additional step involved).

|

Even semi-modern desktop GPUs such as NVIDIA Maxwell and AMD Vega have tile-cache rendering.

PC RDNA 2 discrete GPUs have a multi-MB Infinity Cache that can hold entire framebuffers, helped by DCC (delta color compression) being used everywhere.
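To illustrate why delta compression helps framebuffers fit in a cache, here is a toy delta-coding scheme. It is emphatically not AMD's DCC format, which is not described in this thread. The idea is that smooth regions produce small deltas that need few bits, while noisy blocks gain nothing and are stored raw. The block size, the anchor choice and the fixed-width deltas are all assumptions.

```python
def signed_bits(lo, hi):
    """Smallest two's-complement width that can hold every value in [lo, hi]."""
    if lo == 0 and hi == 0:
        return 0  # a perfectly flat block needs no delta bits at all
    bits = 1
    while not (-(1 << (bits - 1)) <= lo and hi <= (1 << (bits - 1)) - 1):
        bits += 1
    return bits


def compressed_block_bits(block, channel_bits=8):
    """Size in bits of one color channel of a pixel block under toy delta coding.

    The first pixel is stored verbatim as an anchor, then every pixel is stored
    as a fixed-width signed delta from it. If that is not smaller than the raw
    block, the block is kept uncompressed.
    """
    anchor = block[0]
    deltas = [p - anchor for p in block]
    width = signed_bits(min(deltas), max(deltas))
    encoded = channel_bits + width * len(block)
    raw = channel_bits * len(block)
    return min(encoded, raw)
```

For a 16-pixel, 8-bit channel, a flat block shrinks from 128 bits to 8 and a gentle gradient to 88. A block alternating 0 and 255 stays at 128.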

-----

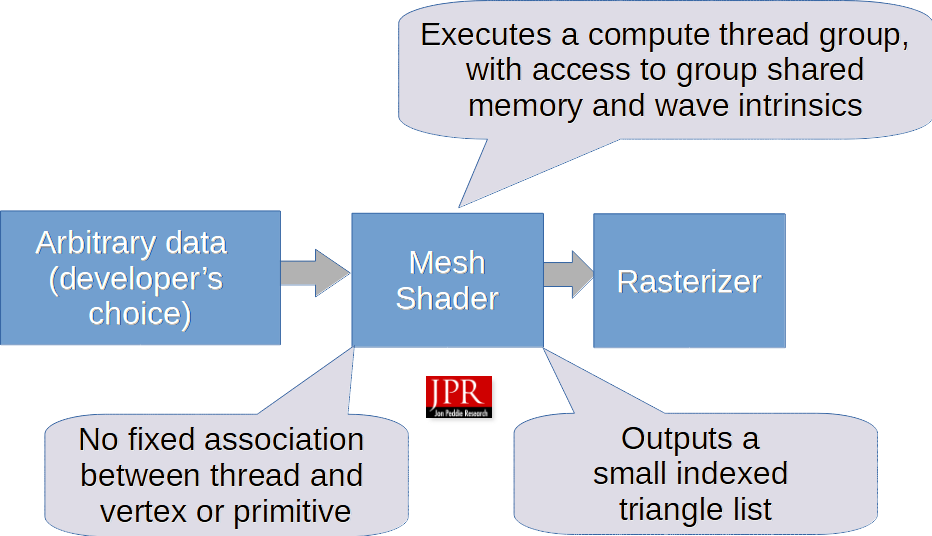

DX12/Vulkan mesh shaders provide a compute-shader-like way to cull triangles before rasterization (1).

Rendering subpixel geometry is wasteful.
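The per-triangle tests such a culling pass might run can be sketched as follows. This is plain Python logic for illustration, not the DX12 or Vulkan mesh shader API. It assumes pixel sample positions at integer + 0.5 and treats a positive signed area as front-facing; the real winding convention depends on API state. The small-triangle test is conservative. If no pixel center falls inside the bounding box, the triangle cannot cover any sample and can be dropped before it ever reaches the rasterizer.

```python
import math


def signed_area2(tri):
    """Twice the signed area of a 2D triangle (sign encodes winding)."""
    (x0, y0), (x1, y1), (x2, y2) = tri
    return (x1 - x0) * (y2 - y0) - (x2 - x0) * (y1 - y0)


def misses_all_pixel_centers(tri):
    """True if the bounding box contains no pixel center (assumed at k + 0.5)."""
    xs = [x for x, _ in tri]
    ys = [y for _, y in tri]
    # First and last pixel-center column/row inside the box.
    first_x, last_x = math.ceil(min(xs) - 0.5), math.floor(max(xs) - 0.5)
    first_y, last_y = math.ceil(min(ys) - 0.5), math.floor(max(ys) - 0.5)
    return first_x > last_x or first_y > last_y


def should_cull(tri):
    """Cull degenerate, back-facing (by assumed winding) or subpixel triangles."""
    if signed_area2(tri) <= 0:
        return True
    return misses_all_pixel_centers(tri)
```

A tiny triangle between pixel centers, a reversed-winding triangle and a zero-area triangle are all rejected. A 10x10 triangle passes through to rasterization.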

Last edited by Hammer on 28-Jul-2022 at 05:46 AM.

_________________

Ryzen 9 7900X, DDR5-6000 64 GB RAM, GeForce RTX 4080 16 GB

Amiga 1200 (Rev 1D1, KS 3.2, PiStorm32lite/RPi 4B 4GB/Emu68)

Amiga 500 (Rev 6A, KS 3.2, PiStorm/RPi 3a/Emu68) |

| | Status: Offline |

| |  Hammer Hammer

|  |

Re: RISC V Laptop announced... Could this be the ultimate AMIGA hardware?

Posted on 28-Jul-2022 6:12:33

| | [ #59 ] |

| |

|

Elite Member

|

Joined: 9-Mar-2003

Posts: 5273

From: Australia | | |

|

| @xe54

Quote:

xe54 wrote:

@Hammer

Quote:

| FYI, Pi Storm's Pi Zero 2's and Pi 3A+'s ARM Cortex A53 supports both big and little endian formats |

Thanks for the info. I meant it more as that I find it funny that people on this forum are constantly mentioning Endian on ARM, whereas the developers of that project didn't seem to worry about it.

It is a sensational bit of work and I know many on here think it is cheating but for me I think of it as a new co-processor! Pi Storm has the price and feature point that this community needs!

|

PiStorm protects existing software and hardware investments while improving performance at a reasonable price, when the boards are available; the Pi 3 and Pi 4 are victims of scalpers.

PiStorm+Raspberry Pi+Emu68 is not a co-processor for the Amiga, since it transparently takes over from the main 68K CPU.

The main reason ARM exists is that Commodore's MOS/CSG didn't evolve the 65xx CPU the way Intel evolved its x86 CPU family! The Commodore factor is a major issue.

Conceptually, the PiStorm+Raspberry Pi+Emu68 combination is closer to Transmeta's VLIW CPUs, which targeted the x86 instruction set. Also, AMD's K12 (ARMv8) and Zen share the same basic R&D building blocks. Hence, I don't see the ARM-based Emu68 as a major problem.

"Design in Germany" Phase 5 and Vampire products are not competitively priced; an overpriced BMW/Mercedes-Benz mentality doesn't work in the desktop computer market.

Unlike the UK, Germany doesn't have a mass-produced, low-cost desktop computer platform, and it's a no-brainer why. The UK's Raspberry Pi is the 21st century's $99 ZX Spectrum microcomputer.

Last edited by Hammer on 28-Jul-2022 at 06:14 AM.

_________________

Ryzen 9 7900X, DDR5-6000 64 GB RAM, GeForce RTX 4080 16 GB

Amiga 1200 (Rev 1D1, KS 3.2, PiStorm32lite/RPi 4B 4GB/Emu68)

Amiga 500 (Rev 6A, KS 3.2, PiStorm/RPi 3a/Emu68) |

| | Status: Offline |

| |  Hans Hans

|  |

Re: RISC V Laptop announced... Could this be the ultimate AMIGA hardware?

Posted on 28-Jul-2022 6:52:24

| | [ #60 ] |

| |

|

Elite Member

|

Joined: 27-Dec-2003

Posts: 5067

From: New Zealand | | |

|

| @Hammer

Yes, the lines between mobile and desktop designs are being inevitably blurred...

@xe54

Quote:

I agree that the lack of HAL is an issue for the time being, though I know that solutions exist and are being implemented. |

What HAL are you talking about? ExecSG has a Hardware Abstraction Layer (HAL), needed in part because of low-level variations between PowerPC chips (e.g., certain config registers). So, that's not a barrier to getting the kernel working on ARM.

Getting existing software to work is a different matter, because it's all PowerPC and 68K.

While ARM is officially bi-endian, I think we'd be better off making the switch to little-endian. Little-endian won, and it's time to accept it.
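For a concrete picture of the conversion cost mentioned in this thread (a big-endian CPU feeding a little-endian GPU), consider what happens to a vertex buffer. Every 32-bit word must be byte-swapped before the GPU can read it. This minimal Python sketch does the swap on raw words rather than floats, so bit patterns such as NaN payloads are preserved exactly. It is illustrative only and is not how Warp3D Nova implements it.

```python
import struct


def swap32(data: bytes) -> bytes:
    """Reverse the byte order of every 32-bit word in a buffer.

    Turns big-endian data into little-endian (and back again, since the
    operation is its own inverse).
    """
    if len(data) % 4:
        raise ValueError("buffer length must be a multiple of 4")
    count = len(data) // 4
    words = struct.unpack(f">{count}I", data)  # read as big-endian words
    return struct.pack(f"<{count}I", *words)   # write as little-endian words
```

A native little-endian CPU would hand the same buffer to the GPU untouched, which is the per-upload work the switch would eliminate.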

Hans

Last edited by Hans on 28-Jul-2022 at 06:53 AM.

_________________

http://hdrlab.org.nz/ - Amiga OS 4 projects, programming articles and more. Home of the RadeonHD driver for Amiga OS 4.x project.

https://keasigmadelta.com/ - More of my work. |

| | Status: Offline |
