| Poster | Thread |  matthey matthey

|  |

CPU instruction pipelining, clock speeds and the Megahertz Myth

Posted on 16-Aug-2025 6:28:39

| | [ #1 ] |

| |

|

Elite Member

|

Joined: 14-Mar-2007

Posts: 2840

From: Kansas | | |

|

| Hammer Quote:

That's not a real situation. High clock speed is a designed feature.

|

There are multiple factors which affect CPU clock speeds. I will start off with an Apple presentation video from 2001.

Megahertz Myth

https://www.youtube.com/watch?v=TJN5GfZuVog

The video explains how a PPC G4 can perform work faster than a Pentium 4 at approximately twice the clock speed. The 7-stage PPC G4 reached 867 MHz while the 20-stage Pentium 4 reached 1,800 MHz using a similar process. A longer instruction pipeline is the most important factor in how high a CPU can clock, but a very deep pipeline has disadvantages, as the video states. The video is deceptive though. The Pentium 4 should be able to feed an instruction into the pipeline in half the time of the G4, as the clock cycle can be shorter due to more pipeline stages and a higher clock speed. This makes the contest much closer. Also, the pipeline only needs to be flushed on a mispredicted branch, which is only about 5-10% of branches.

The 7-stage PPC pipeline was actually new and late for PPC. Even the original G4 only had 4 stages like most earlier PPC designs (PPC601, PPC603 and most G3 designs). The shallow pipeline PPC designs had allowed simpler branch prediction, which had to be improved for the 7-stage pipeline. The Pentium 4 had relatively good branch prediction, but branch penalties were a major reason why Intel eventually returned to a more practical and shallower pipelined P6/Pentium III like design which is still used today.

There were other factors why the G4 did not achieve higher clock speeds. Motorola/Freescale was less aggressive with most of their CPU designs, which included 68k CPUs. Most of their designs were fully static logic designs, which are easier to develop and reduce power (they allow clock speeds from 0 to the max frequency rating, so a core can sleep to save more power). The competition was more likely to use dynamic logic to gain a max clock speed advantage.
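To put rough numbers on the pipeline depth versus clock speed trade-off described above, here is a minimal sketch of a toy pipeline model. Only the stage counts, clock speeds and the 5-10% misprediction range come from the post. The 20% branch frequency, the base CPI of 1 and the idea that a misprediction costs one full pipeline length are assumptions made for illustration, not measurements of either CPU.

```python
def ns_per_instruction(clock_mhz, stages, mispredict_rate,
                       branch_fraction=0.20, base_cpi=1.0):
    """Average time per instruction for a simple scalar pipeline model.

    Assumptions (illustrative only): a mispredicted branch flushes the
    whole pipeline and costs `stages` cycles, and every other
    instruction completes at `base_cpi` cycles per instruction.
    """
    penalty_cycles = branch_fraction * mispredict_rate * stages
    cpi = base_cpi + penalty_cycles
    cycle_ns = 1000.0 / clock_mhz  # 1000 / MHz gives nanoseconds
    return cpi * cycle_ns


# Stage counts and clock speeds as quoted in the post.
for rate in (0.05, 0.10):
    g4 = ns_per_instruction(867, 7, rate)
    p4 = ns_per_instruction(1800, 20, rate)
    print(f"mispredict {rate:.0%}: G4 {g4:.3f} ns, P4 {p4:.3f} ns, "
          f"P4 advantage {g4 / p4:.2f}x")
```

Under these assumptions the deeper pipeline keeps most, but not all, of its roughly 2x clock advantage. The model ignores issue width, cache misses and SIMD throughput, which also affect real results.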

WDC 65C02S - fully static CMOS design with 'C' standing for CMOS and 'S' standing for static

P5 Pentium - used dynamic logic

ARM2 - used dynamic logic

MC68040V - fully static CMOS design

MC68060 - fully static CMOS design

MC68SEC000 - fully static CMOS design

R3000 - static CMOS design

R4000 - used dynamic logic

Pentium 4 - used dynamic logic

https://en.wikipedia.org/wiki/Dynamic_logic_(digital_electronics)

https://en.wikipedia.org/wiki/Static_core

Low power and variable clock frequency support with ultra low power sleep are more important for the embedded market. Other markets may sacrifice low power and easier development for higher clock speeds. The CPU voltage is another factor in attainable clock speeds. Higher voltages make the logic switch faster, while smaller chip fab processes reduce the distance signals have to travel, allowing lower voltages to reduce power. This can be seen with the WDC 65C02S in Table 1 of the following Microprocessor Report.

WDC Carries 6502 Flag into New Arenas

https://www.cecs.uci.edu/~papers/mpr/MPR/ARTICLES/080903.pdf

The WDC 65C02S supports a supply voltage from 1.0 to 6.0 V. The max clock speed at 5 V is 16 MHz drawing 80 mW of power, while at 3.3 V the max clock speed is reduced to 10 MHz but the chip only draws 33 mW. The fully static design provides the flexibility to choose lower power or higher clock speeds. This success allowed 100 million CPUs to be sold by 1994, with 25 million more shipped every year. This was low power despite microcode and a crappy but improved 6502 family ISA. Newer chip fab processes can allow for lower voltage and lower power, but on a similar process it is difficult to beat because the power draw is based on the number of active transistors and the 6502 CPU logic is about as simple as possible for an 8-bit CPU.
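The two operating points quoted above can be turned into energy per clock cycle and average supply current with simple unit arithmetic. This is only a sketch using the three figures from the post for each point; the helper names are made up for illustration.

```python
# Operating points for the WDC 65C02S as quoted in the post.
operating_points = [
    # (supply volts, max clock MHz, power mW)
    (5.0, 16.0, 80.0),
    (3.3, 10.0, 33.0),
]


def energy_per_cycle_nj(power_mw, clock_mhz):
    """Energy per clock cycle in nanojoules (mW / MHz = nJ)."""
    return power_mw / clock_mhz


def supply_current_ma(power_mw, volts):
    """Average supply current in milliamps (mW / V = mA)."""
    return power_mw / volts


for volts, mhz, mw in operating_points:
    print(f"{volts} V @ {mhz} MHz: "
          f"{energy_per_cycle_nj(mw, mhz):.2f} nJ/cycle, "
          f"{supply_current_ma(mw, volts):.1f} mA")
```

By these figures the 3.3 V point spends about a third less energy per cycle than the 5 V point, which is the trade the fully static design lets a system designer make.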

Last edited by matthey on 16-Aug-2025 at 06:34 AM.


|

| | Status: Offline |

| |  Hammer Hammer

|  |

Re: CPU instruction pipelining, clock speeds and the Megahertz Myth

Posted on 18-Aug-2025 2:55:47

| | [ #2 ] |

| |

|

Elite Member

|

Joined: 9-Mar-2003

Posts: 6704

From: Australia | | |

|

| @matthey

Quote:

| The video explains how a PPC G4 can perform work faster than a Pentium 4 at approximately twice the clock speed |

Intel Pentium IV's SIMD hardware is only 64-bit wide i.e. 64-bit FADD SSE and 64-bit FMUL SSE. R&D resource was focused on reaching a very high clock speed.

Intel Pentium IV has other bottlenecks such as a single x86 decoder, which is mitigated by trace cache. Intel Pentium IV is a marketing-driven R&D, with high clock speed being king.

Apple's PPC G4 marketing focused on 128-bit SIMD workloads. It's a good thing Altivec wasn't a guaranteed standard on the PPC camp when IBM Power 5 and PPC 750 series didn't come with Altivec. NXP/Freescale still sells Altivec-less PPC e5500. LOL

Apple's PPC G4 didn't reach production volumes that would affect the X86 camp. IBM PPC 970 (Apple's G5) supported Altivec.

AMD K8 was the 1st x86 CPU with a 128-bit FADD SSE unit, but with a 64-bit FMUL SSE unit.

Intel Core 2 was the 1st x86 CPU with full 128-bit SIMD units i.e. 128-bit FADD and 128-bit FMUL pipelines.

AMD K10 has a full 128-bit SIMD unit, but has one fewer x86 decoder than Intel Core 2. AMD followed high clock speed marketing with Bulldozer.

Both AMD K5 and Intel Pentium P5 have the same five-stage pipeline length, with different reachable clock speed outcomes.

AMD K5-166 reached 116.7 MHz on January 13, 1997.

AMD K5-200 reached 133 MHz in 1997, where it hit a clock speed wall versus mass production yields. The Intel Pentium 200 MHz processor was released in June 1996.

AMD K5 used 500 nm and 350 nm process nodes.

https://www.dosdays.co.uk/media/amd/AMD-K5_Processor_Data_Sheet_(January_1997).pdf

Four-issue superscalar core with six parallel execution units arranged in a five-stage pipeline

https://en.wikichip.org/wiki/amd/microarchitectures/k6

AMD K6's pipeline length ranges from 6 to 7 stages deep.

68060 rev1 with 600 nm process node didn't match Intel's P54C 600 nm process node on clock speed yield vs production volume.

How many 68060 rev1 should I buy to debunk your fiction?

For AMD K7 Athlon vs Intel Pentium III

https://www.tomshardware.com/reviews/athlon-processor,121-6.html

The integer pipeline of Athlon is 10 stages long, which is considered as almost ideal length for clock speeds of 500 - 1000 MHz. Pentium III's integer pipeline is 12 to 17 stages long and thus more sensitive to wrongly predicted branches. As you can see from the picture above, the floating point pipeline of Athlon is 15 stages long, standing against an estimation of over 25 stages in Pentium III.

K7 Athlon integer pipeline depth = 10,

K7 Athlon floating pipeline depth = 15,

Pentium III integer pipeline depth = 12 to 17,

Pentium III floating pipeline depth = 25,

K7 Athlon beats Pentium III in the GHz race. The design's quality matters for reaching high clock speed, not just some pipeline stage count bullet point on a PowerPoint slide.

Granite Ridge Zen 5 vs Strix Point/Turin Zen 5C have different attainable clock speed ranges despite the fact that they both have the same pipeline stage count.

Granite Ridge Zen 5 is designed for a higher clock speed when compared to the higher-density Zen 5C. The layout is different on Granite Ridge Zen 5 vs Strix Point/Turin Zen 5C.

SiFive P550 processor features a 13-stage pipeline and a clock speed of 2.4 GHz when implemented in a 7nm process.

X86-64's 7 nm process implementation exceeds 2.4 GHz.

Last edited by Hammer on 18-Aug-2025 at 03:58 AM.


|

| | Status: Offline |

| |  Hammer Hammer

|  |

Re: CPU instruction pipelining, clock speeds and the Megahertz Myth

Posted on 18-Aug-2025 4:17:25

| | [ #3 ] |

| |

|

Elite Member

|

Joined: 9-Mar-2003

Posts: 6704

From: Australia | | |

|

| @matthey

Quote:

WDC Carries 6502 Flag into New Arenas

https://www.cecs.uci.edu/~papers/mpr/MPR/ARTICLES/080903.pdf

The WDC 65C02S supports a supply voltage from 1.0 to 6.0 V. The max clock speed at 5 V is 16 MHz drawing 80 mW of power, while at 3.3 V the max clock speed is reduced to 10 MHz but the chip only draws 33 mW. The fully static design provides the flexibility to choose lower power or higher clock speeds. This success allowed 100 million CPUs to be sold by 1994, with 25 million more shipped every year. This was low power despite microcode and a crappy but improved 6502 family ISA. Newer chip fab processes can allow for lower voltage and lower power, but on a similar process it is difficult to beat because the power draw is based on the number of active transistors and the 6502 CPU logic is about as simple as possible for an 8-bit CPU. |

WDC efforts targeted the MPU target market, which is far from C64's "kickass" multimedia desktop computer for the money approach.

The RISC-based ARC and ARM were born from the inadequate WDC 65816 and MOS/CSG 65xx CPU technology, respectively.

Like many others, I didn't buy into Amiga being a pure MPU play. I bought into the Amiga as a "kickass" multimedia gaming desktop computer for the money. The Amiga was a value-for-money gaming desktop personal computer.

Amiga's baseline 68000 CPU selection kept track with other mainstream game consoles, such as Sega Genesis/Mega Drive's 68000 selection. It's a poorman's 386SX-16 with half the clock speed.

The performance value market moved towards the poorman's 68LC040 i.e. the MIPS R304x/R05x.

Commodore rejected Motorola 88000 as being too expensive for Amiga Hombre, which targeted the Amiga CD3D use case.

Offer a solution for a competitive CPU+GPU solution up to $50 BOM cost with the early 1991 to 1994 time period's US inflation.


|

| | Status: Offline |

| |  matthey matthey

|  |

Re: CPU instruction pipelining, clock speeds and the Megahertz Myth

Posted on 19-Aug-2025 5:06:18

| | [ #4 ] |

| |

|

Elite Member

|

Joined: 14-Mar-2007

Posts: 2840

From: Kansas | | |

|

| Hammer Quote:

Both AMD K5 and Intel Pentium P5 have the same five-stage pipeline length, with different reachable clock speed outcomes.

|

The K5 is OoO, more complex and uses more transistors. The length of the longest logic path through each stage is the critical path for timing while performing more logic in parallel on each stage should be possible but has other disadvantages. Generally, less logic is beneficial and it is not unusual for simpler in-order CPU cores to clock higher than more complex OoO cores. Likewise, scalar CPU cores are simpler than superscalar CPU cores and have the potential to reach higher clock speeds. The pipeline stages need to be analyzed and balanced as the slowest stage determines the length of the clock cycle. Optimizing the slowest stage can allow the clock cycle to be shorter and the max clock speed to be increased. Development skill and time are required to improve the max clock speed and further improvements become more and more difficult. There are differences and variations in chip fab processes and adjustments that can be made to improve max clock speeds and chip yields even using a similar sized process.
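The point about the slowest stage setting the clock cycle can be sketched in a few lines. The stage delays and the optional latch overhead below are hypothetical values chosen for illustration, not figures for the K5, the Pentium or any other real CPU.

```python
def max_clock_mhz(stage_delays_ns, latch_overhead_ns=0.0):
    """Max clock for a pipeline: the slowest stage sets the cycle time."""
    cycle_ns = max(stage_delays_ns) + latch_overhead_ns
    return 1000.0 / cycle_ns  # 1000 / ns gives MHz


# Hypothetical five-stage pipelines doing the same 50 ns of total work.
unbalanced = [8.0, 10.0, 15.0, 9.0, 8.0]     # one slow stage
balanced = [10.0, 10.0, 10.0, 10.0, 10.0]    # work spread evenly

print(f"unbalanced: {max_clock_mhz(unbalanced):.1f} MHz")
print(f"balanced:   {max_clock_mhz(balanced):.1f} MHz")
```

Two designs with the same stage count and the same total logic can end up with different max clock speeds purely because of how well the stages are balanced.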

Hammer Quote:

68060 rev1 with 600 nm process node didn't match Intel's P54C 600 nm process node on clock speed yield vs production volume.

|

The P5 Pentium had over 200 engineers at its peak and obviously had a well funded R&D budget.

https://en.wikipedia.org/wiki/Pentium_(original)#Development Quote:

Development

The P5 microarchitecture was designed by the same Santa Clara team which designed the 386 and 486. Design work started in 1989; the team decided to use a superscalar RISC architecture which would be a convergence of RISC and CISC technology, with on-chip cache, floating-point, and branch prediction. The preliminary design was first successfully simulated in 1990, followed by the laying-out of the design. By this time, the team had several dozen engineers. It took some 100 million clock cycles of pre-silicon verification test which includes major operating systems and many application were booted and running. They had to use the Quickturn Systems Inc. software to run pre-silicon simulation program which was 30,000 times quicker than the previous technique method available. The design was taped out, or transferred to silicon, in April 1992, at which point beta-testing began. By mid-1992, the P5 team had 200 engineers. Intel at first planned to demonstrate the P5 in June 1992 at the trade show PC Expo, and to formally announce the processor in September 1992, but design problems forced the demo to be cancelled, and the official introduction of the chip was delayed until the spring of 1993. The first computer systems featuring the Pentium appeared in the summer of 1993, the first being Advanced Logic Research and their Evolution V workstation, released in the first week of July 1993.

|

The 68060 had a small team (12 engineers?) and likely had engineers pulled off the project so the announced full 68060@66MHz with MMU and FPU was never released. The development for the LC and EC versions for embedded use that removed the MMU and FPU received development funding though. Priorities obviously changed from 68060 development to PPC development.

Hammer Quote:

WDC efforts targeted the MPU target market, which is far from C64's "kickass" multimedia desktop computer for the money approach.

The RISC-based ARC and ARM were born from the inadequate WDC 65816 and MOS/CSG 65xx CPU technology, respectively.

|

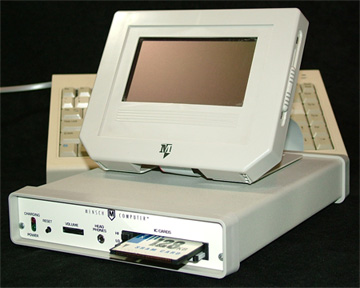

WDC produced the Mensch computer.

https://www.westerndesigncenter.com/wdc/Mensch_Computer.php

The 6502 family is the wrong CPU family if wanting to make a '"kickass" multimedia desktop computer'.

Hammer Quote:

Like many others, I didn't buy into Amiga being a pure MPU play. I bought into the Amiga as a "kickass" multimedia gaming desktop computer for the money. The Amiga was a value-for-money gaming desktop personal computer.

|

Unfortunately, Commodore failed to adapt the 68k Amiga from a "kickass" multimedia desktop computer to a "kickass" multimedia hobby, embedded and educational computer like the RPi. All they had to do was keep integrating, cost reducing and improving the value like Jay Miner showed with his actions and talked about in his speeches. Former A600 owner and RPi CEO Eben Upton understands the philosophy while Trevor "Dinosaur" Dickinson has been trying to stick a square peg through a round hole for over a decade.

Hammer Quote:

Amiga's baseline 68000 CPU selection kept track with other mainstream game consoles, such as Sega Genesis/Mega Drive's 68000 selection. It's a poorman's 386SX-16 with half the clock speed.

The performance value market moved towards the poorman's 68LC040 i.e. the MIPS R304x/R05x.

|

You skipped over the 68EC020/68EC030 which were affordable and could have been standard earlier than the A1200. The slow development of the Amiga chipset was more of an upgrade problem than the CPU for Commodore. A 68EC030@28MHz in the A1200 and CD32 with a full CMOS AA+ chipset would have been much more competitive as the CPU and chipset would have both doubled performance and had synergies together. The 68k SoC perhaps could have doubled the performance of each again, perhaps more if the caches were increased. The $100 USD price savings from integration should have allowed the 68k to compete with cheap MIPS trash too. Sure, the 68020/68030 has to be clocked up too much for performance and the 68040 was not competitive due to arriving so late but the 68060 was available at the end of the tunnel which Commodore could have used for decades with incremental upgrades. They had to make it through the tunnel first by upgrading the Amiga chipset but they were more experienced at sabotaging it.

Last edited by matthey on 19-Aug-2025 at 05:12 AM.

|

| | Status: Offline |

| |  Hammer Hammer

|  |

Re: CPU instruction pipelining, clock speeds and the Megahertz Myth

Posted on 20-Aug-2025 1:39:49

| | [ #5 ] |

| |

|

Elite Member

|

Joined: 9-Mar-2003

Posts: 6704

From: Australia | | |

|

| @matthey

Quote:

The K5 is OoO, more complex and uses more transistors. The length of the longest logic path through each stage is the critical path for timing while performing more logic in parallel on each stage should be possible but has other disadvantages. Generally, less logic is beneficial and it is not unusual for simpler in-order CPU cores to clock higher than more complex OoO cores. Likewise, scalar CPU cores are simpler than superscalar CPU cores and have the potential to reach higher clock speeds.

|

FYI, non-PR K5 (SSA/5) SKUs used a 500 nm process node, and the clock speed reached 100 MHz.

K5 (Model 1) with PR SKUs used a 350 nm process node and the clock speed reached 100 MHz.

K5 (Model 2) with PR SKUs used a 350 nm process node and the clock speed reached 116.7 MHz.

K5 (Model 3) with PR SKUs used a 350 nm process node and the clock speed reached 133 MHz.

Despite a process node switch from 500 nm to 350 nm and three stepping improvements, the clock speed uplift was subpar. The K5 ran into a clock speed scaling wall.

Both 133 MHz K5-PR200 (Model 3) and K6 (Model 6, NexGen Nx686 core) used the same 350 nm process node. K6 (Model 6) reached 233 MHz before switching to K6 (Model 7)'s 250 nm process node, which reached 300 MHz.
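The clock speed figures above can be compared as percentage uplifts. This is a small sketch using only the MHz values quoted in this post; the variable names are made up for illustration.

```python
def uplift_percent(old_mhz, new_mhz):
    """Relative clock speed gain from old_mhz to new_mhz, in percent."""
    return (new_mhz - old_mhz) / old_mhz * 100.0


# Peak clock speeds as quoted in the post.
k5_500nm = 100.0   # K5 (SSA/5)
k5_350nm = 133.0   # K5 (Model 3)
k6_350nm = 233.0   # K6 (Model 6)
k6_250nm = 300.0   # K6 (Model 7)

print(f"K5 500 nm -> K5 350 nm: {uplift_percent(k5_500nm, k5_350nm):.0f}%")
print(f"K5 350 nm -> K6 350 nm: {uplift_percent(k5_350nm, k6_350nm):.0f}%")
print(f"K6 350 nm -> K6 250 nm: {uplift_percent(k6_350nm, k6_250nm):.0f}%")
```

On these numbers the change of core design on the same 350 nm node gave a larger clock gain than either process shrink.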

With the NexGen Nx686 core, K6 has a different engineering team with different clock speed results. K6 has a 6-stage pipeline.

68060 rev 6's 450 nm process node is between the 500 nm and 350 nm process nodes. The 68060's process node switched from 600 nm to 450 nm.

Quote:

The pipeline stages need to be analyzed and balanced as the slowest stage determines the length of the clock cycle. Optimizing the slowest stage can allow the clock cycle to be shorter and the max clock speed to be increased. Development skill and time are required to improve the max clock speed and further improvements become more and more difficult. There are differences and variations in chip fab processes and adjustments that can be made to improve max clock speeds and chip yields even using a similar sized process.

|

Coldfire V4 ditched significant 68K hardware sections to reach a higher clock speed. Coldfire V4 used 180 nm and 130 nm (?) process nodes. Coldfire V4's clock speed reached around 266 MHz.

Coldfire V5's clock speed reached around 333 MHz to 366 MHz with a 130 nm process node.

For HP printers, ColdFire V5x clock speed reached around 540 MHz.

--------------

From the "second source" x86 AMD:

Mobile K6-2+ (Model 13, 3DNow), with 180 nm process node has reached 570 MHz.

Mobile K6-III+ (Model 13, Enhanced 3DNow) with 180 nm process node has reached 500 MHz.

Quake-related FPU improvements caused clock speed degradation.

K7 Athlon XP "Barton" (Model 10) with 130 nm process node has reached 2333 MHz.

For mobile, Athlon XP-M "Barton" with 130 nm process node;

Mainstream SKUs reached 2133 MHz

Desktop replacement SKUs reached 2200 MHz

Low voltage SKUs reached 1867 MHz

K8 Athlon 64 FX "ClawHammer" (CG steppings) with 130 nm process node has reached 2600 MHz.

For mobile,

Mobile K8 Athlon 64 "ClawHammer" with 130 nm process node;

Mainstream SKUs reached 2400 MHz,

Standard power SKUs reached 2200 MHz,

Low power SKUs reached 1600 MHz.

Mobile K8 Athlon 64 "Odessa" with 130 nm process node;

Desktop replacement SKUs reached 1800 MHz.

Low power SKUs reached 2000 MHz.

-------------------

Motorola PowerPC 7447 and 7457 used a 130 nm process node, but they were late to market. This CPU core was reused for the Freescale PPC e600 (90 nm process node).

------------------

Another factor in the game console market:

A K7 Duron in the 7x0 MHz range was offered for the original Xbox game console before Bill Gates intervened and imposed the Intel Coppermine at 733 MHz instead.

Removing Bill Gates from MS removes the personal link with Intel's Andy Grove.

https://www.gamespot.com/articles/20-years-later-xbox-creator-apologizes-to-amd-ceo-for-last-minute-switch-to-intel-pure-politics/1100-6496996/

Microsoft dropped AMD in favor of Intel due to "pure politics," Blackley said in another tweet.

Seamus Blackley designed the original Xbox.

AMD offered an Xbox CPU for around $20. The original Xbox CPU is based on K7 (Duron) and the nForce chipset (licensed AMD 760 northbridge with NVIDIA NV2A IGP). The nForce chipset was modified for the Intel Coppermine FSB bus.

|

| | Status: Offline |

| |  Hammer Hammer

|  |

Re: CPU instruction pipelining, clock speeds and the Megahertz Myth

Posted on 20-Aug-2025 1:42:10

| | [ #6 ] |

| |

|

Elite Member

|

Joined: 9-Mar-2003

Posts: 6704

From: Australia | | |

|

| @matthey

Quote:

You skipped over the 68EC020/68EC030 which were affordable and could have been standard earlier than the A1200. The slow development of the Amiga chipset was more of an upgrade problem than the CPU for Commodore. A 68EC030@28MHz in the A1200 and CD32 with a full CMOS AA+ chipset would have been much more competitive as the CPU and chipset would have both doubled performance and had synergies together. The 68k SoC perhaps could have doubled the performance of each again, perhaps more if the caches were increased. The $100 USD price savings from integration should have allowed the 68k to compete with cheap MIPS trash too. Sure, the 68020/68030 has to be clocked up too much for performance and the 68040 was not competitive due to arriving so late but the 68060 was available at the end of the tunnel which Commodore could have used for decades with incremental upgrades. They had to make it through the tunnel first by upgrading the Amiga chipset but they were more experienced at sabotaging it.

|

For 3D workloads, MIPS R30x0 beats the heavily microcoded 68EC020 and 68EC030.

https://www.electronicproducts.com/mips-processors-to-push-performance-and-price/

From 1992, the IDT MIPS R3040 @ 20 MHz was priced at $15, which puts it in the near 1 IPC class of the 68LC040 at a budget price.

PlayStation 1's LSI Logic R3051 selection is a no-brainer.

There's a reason why Sega rejected the 68030 for the Saturn in favor of the SuperH2 @ 28 MHz.

68EC020's ADD instruction takes 2 (from L1 cache) to 3 clock cycles, i.e. 0.5 to 0.33 IPC for the ADD instruction.

PS1's LSI Coreware modified MIPS R3051 has 4KB L1 instruction cache and 1KB data scratchpad, which is more than 68EC020's 256-byte L1 instruction cache, and 68EC030's 256 byte L1 instruction cache and 256 byte L1 data cache.

Even factoring in the code density issue with MIPS R3051's 4KB L1 instruction cache being 2KB instruction cache effective, it's still more than 68EC030's 256-byte L1 instruction cache.
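The IPC and cache comparisons above are simple ratios, sketched here. The cycle counts and cache sizes are the ones quoted in this post; the 0.5 relative code density for MIPS is the post's own working figure, treated here as an assumption and not a measured value.

```python
def ipc(cycles_per_instruction):
    """Instructions per clock for an instruction taking a fixed cycle count."""
    return 1.0 / cycles_per_instruction


def effective_icache_bytes(cache_bytes, relative_code_density):
    """Cache size scaled by code density relative to the 68k (1.0 = equal)."""
    return cache_bytes * relative_code_density


# 68EC020 ADD: 2 cycles from cache, 3 cycles otherwise (per the post).
print(f"68EC020 ADD IPC: {ipc(2):.2f} to {ipc(3):.2f}")

# R3051 4 KB instruction cache at an assumed 0.5 relative code density,
# compared with the 68EC030's 256-byte instruction cache.
r3051_effective = effective_icache_bytes(4096, 0.5)
print(f"R3051 effective I-cache: {r3051_effective:.0f} bytes, "
      f"{r3051_effective / 256:.0f}x the 68EC030's 256 bytes")
```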

https://archive.computerhistory.org/resources/access/text/2013/04/102723262-05-01-acc.pdf

DataQuest 1991, page 119 of 981, volume from 1000 to 5000

For 1992,

68EC020-16, $16.06

68EC020-25, $19.99

68020-25, $35.13

68EC030-25, $35.94 (missing MMU, not Unix capable, used in A4000/030)

68030-25, $108.75

68040-25, $418.52

68EC040-25, $112.50 (missing MMU and FPU, Commodore management rejected three custom chips for Amiga).

Commodore was able to obtain the 68EC020-16 for $8 per unit, down from $16.06, which is a discount of about $8.

Applying a $8 discount on 68EC030-25's $35.94 would land on $27.94.

Applying a 50% discount on 68EC030-25's $35.94 would land on $17.97.
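The discount arithmetic above can be checked with a short sketch. The prices are the 1992 figures listed in this post; the helper names are made up for illustration, and `Decimal` is used only to keep the cents exact.

```python
from decimal import Decimal

# 1992 prices as listed in the post (1000 to 5000 unit volume).
PRICES_1992 = {
    "68EC020-16": Decimal("16.06"),
    "68EC020-25": Decimal("19.99"),
    "68020-25": Decimal("35.13"),
    "68EC030-25": Decimal("35.94"),
    "68030-25": Decimal("108.75"),
    "68040-25": Decimal("418.52"),
    "68EC040-25": Decimal("112.50"),
}


def with_flat_discount(price, dollars):
    """Price after subtracting a fixed dollar amount."""
    return price - dollars


def with_percent_discount(price, percent):
    """Price after a percentage discount, rounded to cents."""
    return (price * (100 - percent) / 100).quantize(Decimal("0.01"))


ec030 = PRICES_1992["68EC030-25"]
print("68EC030-25 with $8 off: ", with_flat_discount(ec030, Decimal("8")))
print("68EC030-25 with 50% off:", with_percent_discount(ec030, 50))
```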

I didn't skip over the 68EC020 and 68EC030 when I factored in the performance vs price and absolute item cost.

During 1991, for the Christmas 1992 release, Commodore engineers were able to spec 68EC040-25 with three custom chips for $120. This is before adding AGA or AAA multimedia chipsets. Commodore management rejected this path for the Amiga. 68EC040-25 would need a 68040-class modern cache coherence chipset.

A1000plus Mk2 for Xmas 1992 with 68EC040-25 would be an embarrassment for Commodore PCs with 486SX CPUs.

Due to A600's mass production debacle, each A1200 sold has $50 allocated to pay back A600's production-related debt. This $50 and $8 68EC020-16 allocation can be something else. You got $58 to maneuver. What's your spec offer?

---------------------------

Commodore was able to obtain the 68EC020-16 for nearly the price of a 68000-16 in November 1990.

Minus AA Lisa, Commodore had most of the A1000plus/A1200's components with established BOM cost in November 1990.

If AA Lisa were available earlier, wedge A1200 was possible around November 1990!

Minus AA Lisa, the wedge A1200 and pizzabox A1000plus had 32-bit guts of A3000.

During November 1990,

The wedge A500plus BOM cost $176,

The pizza box AA1000plus BOM cost $350.42 with two Zorro II slots and 68000-16 @ 14 MHz.

The pizza box AA1000plus BOM cost $351.96 with a single Zorro II slot and 68EC020-16 @ 14 MHz.

The pizza box AA1000plus BOM cost $371.96 with two Zorro II slots and 68EC020-16 @ 14 MHz.
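The three AA1000plus BOM figures above can be differenced to see what each configuration change adds. This is only a sketch: the post does not break the BOM down, so treating a difference as the cost of one change is an assumption.

```python
from decimal import Decimal

# November 1990 AA1000plus BOM figures as quoted in the post,
# keyed by (CPU, number of Zorro II slots).
BOM = {
    ("68000-16", 2): Decimal("350.42"),
    ("68EC020-16", 1): Decimal("351.96"),
    ("68EC020-16", 2): Decimal("371.96"),
}

# Same CPU, one slot versus two slots.
second_slot_delta = BOM[("68EC020-16", 2)] - BOM[("68EC020-16", 1)]

# Same slot count, 68000-16 versus 68EC020-16 configuration.
cpu_config_delta = BOM[("68EC020-16", 2)] - BOM[("68000-16", 2)]

print("Second Zorro II slot adds:      $", second_slot_delta)
print("68EC020-16 configuration adds:  $", cpu_config_delta)
```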

AA1000plus has an IDE controller. The Bridgette chip is in development for the A1000 Plus.

1991 DSP3210 (50MHz in A3000+) cost is $30.

1991 68030-25 QFP cost is $119.84. Premium price for MMU i.e., jumping on the premium Unix cost bandwagon.

From 1994 Commodore_Post_Bankruptcy.pdf, A1200's BOM cost is $226.

Last edited by Hammer on 20-Aug-2025 at 03:02 AM.


|

| | Status: Offline |

| |  bhabbott bhabbott

|  |

Re: CPU instruction pipelining, clock speeds and the Megahertz Myth

Posted on 21-Aug-2025 5:43:04

| | [ #7 ] |

| |

|

Cult Member

|

Joined: 6-Jun-2018

Posts: 585

From: Aotearoa | | |

|

| @Hammer

Quote:

Hammer wrote:

A1000plus Mk2 for Xmas 1992 with 68EC040-25 would be an embarrassment for Commodore PCs with 486SX CPUs. |

No, it would be irrelevant.

Commodore made at least two different 486SX-25 PCs. One had similar form factor and appearance to the A4000. It had a DTK motherboard with on-board VGA and riser board for horizontal cards. The other one had a taller case with a 'standard' ISA bus motherboard and VGA card. The only part Commodore made was the case, so they were equivalent to any other clone PC of the day.

If you wanted a 486 PC then either of these machines would do the job. An Amiga wouldn't, no matter what 68k CPU it had. It wouldn't run Microsoft Windows, or Word or Excel, or MYOB or QuickBooks, or even Wolfenstein 3D. It would be completely useless except for running Amiga software.

Considering that serious handicap, this EC040 Amiga had better be cheap. The A1200 was cheap, but your 'A1000plus mk2' wouldn't be. It would need on-board FastRAM like the A4000 had. It would also need a built-in power supply and external keyboard, and at least 2 slots to make it worthwhile. This would push the retail price up to well over $1000. I would love to have seen a machine like that come out, but it wasn't going to take the world by storm.

|

| | Status: Offline |

| |  matthey matthey

|  |

Re: CPU instruction pipelining, clock speeds and the Megahertz Myth

Posted on 21-Aug-2025 8:12:37

| | [ #8 ] |

| |

|

Elite Member

|

Joined: 14-Mar-2007

Posts: 2840

From: Kansas | | |

|

| Hammer Quote:

68060 rev 6's 450 nm process node is between the 500 nm and 350 nm process nodes. The 68060's process node switched from 600 nm to 450 nm.

|

The 68060 die shrinks were likely the minimum rework to move to a new process node. A hint is that there is no record of changes to the transistor count. Intel even performed one of these minimal rework die shrinks once with the P54CQS in 1995.

https://en.wikipedia.org/wiki/Pentium_(original)#P54CQS Quote:

The P54C was followed by the P54CQS in early 1995, which operated at 120 MHz. It was fabricated in a 350 nm BiCMOS process and was the first commercial microprocessor to be fabricated in a 350 nm process. Its transistor count is identical to the P54C and, despite the newer process, it had an identical die area as well. The chip was connected to the package using wire bonding, which only allows connections along the edges of the chip. A smaller chip would have required a redesign of the package, as there is a limit on the length of the wires and the edges of the chip would be further away from the pads on the package. The solution was to keep the chip the same size, retain the existing pad-ring, and only reduce the size of the Pentium's logic circuitry to enable it to achieve higher clock frequencies.

|

The P54CQS clock speed gain and power reduction were modest, much like for the 68060 die shrinks.

Pentium Is First CPU to Reach 0.35 Micron (March 27, 1995 Microprocessor Report)

https://websrv.cecs.uci.edu/~papers/mpr/MPR/ARTICLES/090402.pdf Quote:

The 120-MHz Pentium is rated at 140 SPECint92, a 15% increase over the previous high end, the 100-MHz part. Its external bus runs at 60 MHz, one-half of the CPU speed. The new device is otherwise functionally identical to the 0.5-micron P54C processor. At 120 MHz, its maximum power dissipation is 10 W, about the same as that of a 100-MHz P54C; the more advanced process helps keep the power consumption down despite the higher clock frequency.

...

Initial P54CQS Is Pad-Limited

To bring the 120-MHz part to market as quickly as possible, Intel is using an unusual-looking die that, as Figure 1 shows, combines the P54C pad ring with the core circuitry optically reduced to 0.35-micron rules. Thus, the die size of this version is 163 mm^2, the same as that of the P54C. By retaining the old pad ring, Intel can continue to use the same packaging and wire-bonding machines it uses for the current parts. The smaller transistors run faster, however, allowing the higher clock speed. Furthermore, the effective area (see 071004.PDF) of the die is much less than that of the P54C, improving yield. Thus, despite the increased wafer cost of the 0.35-micron process, the 'CQS parts will carry about the same manufacturing cost as the P54C, according to the MDR Cost Model.

The 'CQS is based on the C2 stepping of the P54C, so there are relatively few known bugs. One problem with the 120-MHz part, however, prevents operation in dual-processor mode with a shared cache. The optical shrink caused timing changes that do not allow signals to propagate properly between the processors. Multiprocessor systems that do not use a shared cache will work correctly. Intel plans to fix this problem in a future version.

Over time, Intel plans to deploy a redesigned 'CQS die that takes full advantage of the 0.35-micron design rules and eliminates the empty area inside the pad ring. This new design, code-named P54CS, will use a smaller pad pitch to reduce the size of the pad ring; this new pitch requires some modifications to the existing wire-bonding machines.

The die size of the P54CS will be about 90 mm^2 , nearly as small as a 486DX2 or DX4. At this size, the number of good die per wafer (according to the MDR Cost Model) will increase from about 65 for the P54C to roughly 130 for the P54CS, potentially doubling Intel's capacity for Pentium chips. Of course, the full effect of this change will not be seen until Intel implements sufficient capacity in the new process, which will take a year or more; currently, the 0.35-micron process is available only in Intel's Aloha (Oregon) facility.

|

This minimal-effort die shrink is called an optical shrink. It improves chip yields, which was likely the motivation for the 68060 die shrinks, since raising the max clock ratings clearly was not. According to the article, Motorola was the furthest behind in fab technology of the CPU producers mentioned.
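To illustrate why a smaller die raises the number of good chips per wafer, here is a rough sketch using a common first-order dies-per-wafer estimate and a Poisson yield model. The 200 mm wafer diameter and the defect density are my assumptions for illustration only; they are not from the article and this does not reproduce the MDR Cost Model figures.

```python
import math

def gross_dies_per_wafer(die_area_mm2, wafer_diameter_mm=200.0):
    """First-order estimate: wafer area / die area minus an edge-loss term.
    The 200 mm wafer diameter is an assumption, not a figure from the article."""
    radius = wafer_diameter_mm / 2.0
    area_term = math.pi * radius ** 2 / die_area_mm2
    edge_term = math.pi * wafer_diameter_mm / math.sqrt(2.0 * die_area_mm2)
    return int(area_term - edge_term)

def poisson_yield(die_area_mm2, defects_per_mm2=0.005):
    """Poisson model: probability that a die of this area has zero defects.
    The defect density is a made-up illustrative value."""
    return math.exp(-die_area_mm2 * defects_per_mm2)

def good_dies_per_wafer(die_area_mm2):
    """Gross dies scaled by the modeled yield."""
    return gross_dies_per_wafer(die_area_mm2) * poisson_yield(die_area_mm2)

if __name__ == "__main__":
    for name, area in (("P54C, 163 mm^2", 163.0), ("P54CS, 90 mm^2", 90.0)):
        print(name, gross_dies_per_wafer(area), round(good_dies_per_wafer(area), 1))
```

The smaller die wins twice in this model: more candidates fit on the wafer and each one is less likely to catch a defect. The optical shrink described in the article keeps the 163 mm^2 outline, so there only the yield term improves (through the smaller effective area).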

Hammer Quote:

Coldfire V4 ditched a significant 68K hardware sections to reach a higher clock speed. Coldfire V4 used 180 nm and 130 nm (?) process nodes. Coldfire V4's clock speed reached around 266 MHz.

Coldfire V5's clock speed reached around 333 MHz to 366 MHz with a 130 nm process node.

For HP printers, ColdFire V5x clock speed reached around 540 MHz.

|

ColdFire cut down the powerful 68k cores to be smaller and cheaper, like RISC cores, for the embedded market and to avoid competing with the fat PPC ISA embedded cores that could not be scaled down as far.

Coldfire compatible FPGA core with ISA enhancement - Brainstorming

https://community.nxp.com/t5/ColdFire-68K-Microcontrollers/Coldfire-compatible-FPGA-core-with-ISA-enhancement-Brainstorming/td-p/238714?profile.language=en GunnarVB Quote:

Hello,

I work as chip developer.

While creating a super scalar Coldfire ISA-C compatible FPGA Core implementation,

I've noticed some possible "Enhancements" of the ISA.

I would like to hear your feedback about their usefulness in your opinion.

Many thanks in advance.

1) Support for BYTE and WORD instructions.

I've noticed that re-adding the support for the Byte and Word modes to the Coldfire comes relative cheap.

The cost in the FPGA for having "Byte, Word, Longword" for arithmetic and logic instructions like

ADD, SUB, CMP, OR, AND, EOR, ADDI, SUBI, CMPI, ORI, ANDI, EORI - showed up to be neglect-able.

Both the FPGA size increase as also the impact on the clockrate was insignificant.

2) Support for more/all EA-Modes in all instructions

In the current Coldfire ISA the instruction length is limited to 6 Byte, therefore some instructions have EA mode limitations.

E.g the EA-Modes available in the immediate instruction are limited.

That currently instructions can either by 2,4, or 6 Byte length - reduces the complexity of the Instruction Fetch Buffer logic.

The complexity of this unit increases as more options the CPU supports - therefore not supporting a range from 2 to over 20 like 68K - does reduce chip complicity.

Nevertheless in my tests it showed that adding support for 8 Byte encoding came for relative low cost.

With support of 8 Byte instruction length, the FPU instruction now can use all normal EA-modes - which makes them a lot more versatile.

MOVE instruction become a lot more versatile and also the Immediate Instruction could also now operate on memory in a lot more flexible ways.

While with 10 Byte instructions length support - there are then no EA mode limitations from the users perspective - in our core it showed that 10 byte support start to impact clockrate - with 10 Byte support enabled we did not reach anymore the 200 MHz clockrate in Cyclone FPGA that the core reached before.

I'm interested in your opinion of the usefulness of having Byte/Word support of Arithmetic and logic operations for the Coldfire.

Do you think that re-adding them would improve code density or the possibility to operate with byte data?

I would also like to know if you think that re-enabling the EA-modes in all instruction would improve the versatility of the Core.

Many thanks in advance.

Gunnar

|

ColdFire had already added back a large portion of 68k support and Gunnar suggested the cost was low to add back more.

He suggests that the max clock speed differences between ColdFire and 68k cores are not only minimal but that the 68060's superscalar execution limit of 6 instruction bytes/cycle could easily be increased to 8 bytes/cycle, significantly increasing the already very good 68060 multi-issue rate. This is based on timing in an FPGA, which is more challenging than in an ASIC. Gunnar's data suggests that it was never worthwhile to drop 68k compatibility for ColdFire. The loss of compatibility and the downgrade of features, performance and code density were major reasons why Motorola lost the market to ARM with Thumb-2, which was upgrading features while retaining compatibility (until recently, when ARM removed their three 32-bit ISAs from their Cortex-A cores with a loss of compatibility and code density). ARM was improving their code density, adding enhancements and beefing up their performance while Motorola was castrating their beautiful baby before tossing it out with the bath water.
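As a toy illustration of the bytes/cycle point, the sketch below pairs consecutive instructions for dual issue only when their combined length fits a per-cycle byte budget. It is a deliberately simplified model of my own: it ignores the real 68060 pairing rules (dependencies, instruction classes, alignment), and the instruction length sequence is invented.

```python
def cycles_with_fetch_budget(lengths, budget_bytes):
    """Count issue cycles for a stream of instruction lengths (in bytes).

    Greedy model: two consecutive instructions issue in the same cycle
    only if their combined length fits in budget_bytes; otherwise the
    first one issues alone. Hypothetical model, not 68060 pairing rules.
    """
    cycles = 0
    i = 0
    while i < len(lengths):
        if i + 1 < len(lengths) and lengths[i] + lengths[i + 1] <= budget_bytes:
            i += 2  # dual issue
        else:
            i += 1  # single issue
        cycles += 1
    return cycles

if __name__ == "__main__":
    stream = [2, 4, 4, 4, 2, 6]  # invented mix of 2-, 4- and 6-byte instructions
    print(cycles_with_fetch_budget(stream, 6))
    print(cycles_with_fetch_budget(stream, 8))
```

With this invented stream the 8-byte budget lets more 4+4 and 2+6 byte pairs issue together, which is the kind of gain being argued for. How large the gain is on real code depends on the actual instruction length mix.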

Fully synthesized ColdFire cores used auto layout with no custom circuit layout which is far from optimal for clock speeds and along the lines of what a small fabless semiconductor development team could produce.

MOTOROLA THAWS COLDFIRE V4 (May 15, 2000 Microprocessor Report)

https://www.cecs.uci.edu/~papers/mpr/MPR/2000/20000515/142001.PDF Quote:

The larger caches are the biggest reason that the die didn't shrink dramatically. Another reason is starkly visible in Figure 2, the die photo. ColdFire is the only family of processors from Motorola that's entirely synthesized from high-level models with automated design tools. There's no custom circuit layout at all. Compiled chips are bigger, slower, and less power-efficient than full-custom designs, but they are much quicker and cheaper to create. Where a hand-packed design typically has neat blocks of function units inside a Piet Mondrian grid of buses, the 5407 has an amorphous mass of compiler-generated circuits on a Jackson Pollock canvas of silicon. The only semblance of order comes from the caches and on-chip memories around the periphery of the die. They're compiled too, but SRAM arrays obediently fall into dense rows and columns, even without a guiding hand.

Fortunately, the mess of logic circuitry isn't as inefficient as it appears. Based on Motorola's upper-range power consumption estimate of 700mW, the 5407 delivers a whopping 367 mips per watt, nearly four times better than the 5307's 94.6 mips per watt. Beauty is in the eye of the beholder, but performance can be measured.

...

ColdFire's biggest competition is probably Motorola's own 68K, which continues to score new design wins. There's no chance the 5407 will dethrone the 68K. For one thing, the 68K line has processors with true superscalar pipelines, FPUs, and MMUs - valuable features that Motorola may add to future versions of ColdFire but is withholding for now. Also, as mentioned above, ColdFire isn't completely compatible with the 68K.

To ease the transition to ColdFire, Motorola offers free migration tools on its Web site (www.motorola.com/coldfire). One tool is an emulator that traps unsupported 68K instructions and executes them in software. Another tool is a static translator that converts 68K assembly language into ColdFire assembly language. (Both tools are from MicroAPL, which offers technical support for $500.)

ColdFire is an important part of Motorola's FlexCore program, a cell library and design system for ASIC customers. Though ColdFire is not as broadly licensed as the cores from ARC Cores, ARM, Lexra, MIPS, and Tensilica, FlexCore opens the door to custom chips for special applications. In fact, Motorola has designed more ASICs than standard parts using ColdFire cores. Three V4-based ASICs are currently in development, including two that will be manufactured in 0.15- or 0.18-micron processes.

|
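The efficiency figures in the quoted article can be sanity checked with simple arithmetic. The sketch below only uses the numbers from the quote (367 mips per watt at 700 mW for the 5407, 94.6 mips per watt for the 5307); the variable names are mine.

```python
# Figures taken from the quoted Microprocessor Report article.
mips_per_watt_5407 = 367.0
power_watts_5407 = 0.700  # upper-range power estimate of 700 mW
mips_per_watt_5307 = 94.6

# mips = (mips per watt) * watts
implied_mips_5407 = mips_per_watt_5407 * power_watts_5407

# "nearly four times better" efficiency
efficiency_ratio = mips_per_watt_5407 / mips_per_watt_5307

print(round(implied_mips_5407, 1), round(efficiency_ratio, 2))
```

The implied throughput is about 257 mips at the upper power estimate, and the efficiency ratio comes out a little under 3.9, consistent with "nearly four times".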

ColdFire's incompatibility with the 68k divided Motorola's embedded market as they tried to kill the 68k in favor of PPC. They succeeded in killing the 68k, but most customers fled to ARM cores with Thumb-2. Motorola tried openly licensing some of their ColdFire cores and even a 68000 core, but it was always too little, too late. The problem was never the core designs, which were often at least competitive, but everything else.

Last edited by matthey on 21-Aug-2025 at 04:31 PM.

|

| | Status: Offline |

| |  cdimauro cdimauro

|  |

Re: CPU instruction pipelinining, clock speeds and the Megahertz Myth

Posted on 21-Aug-2025 18:31:17

| | [ #9 ] |

| |

|

Elite Member

|

Joined: 29-Oct-2012

Posts: 4602

From: Germany | | |

|

| Just a note on the Pentium-4. It was designed with a lot of pipeline stages (20) with some precise goals:

- pushing up the operating frequencies;

- focusing on multimedia / number crunching tasks (read: code which is LESS sensitive to branch mispredictions);

- added HyperThreading to better address the cases where the pipeline is stalled, and the backend can be used for performing other tasks.

If you take a look at the benchmarks of the time, it did not perform well on regular code. But it shined on number crunching stuff, and much more when an application was able to use two hardware threads.

Unfortunately, the first goal crashed against the transistor/silicon limits, and Intel had to abandon this microarchitecture (the plan was to reach 10 GHz by 2010). |

| | Status: Offline |

| |  Hammer Hammer

|  |

Re: CPU instruction pipelinining, clock speeds and the Megahertz Myth

Posted on 23-Aug-2025 4:18:22

| | [ #10 ] |

| |

|

Elite Member

|

Joined: 9-Mar-2003

Posts: 6704

From: Australia | | |

|

| @bhabbott

Quote:

No, it would be irrelevant.

|

No, it would be relevant, like the Mac LC 475's 68LC040-25.

Quote:

Commodore made at least two different 486SX-25 PCs. One had similar form factor and appearance to the A4000. It had a DTK motherboard with on-board VGA and riser board for horizontal cards. The other one had a taller case with a 'standard' ISA bus motherboard and VGA card. The only part Commodore made was the case, so they were equivalent to any other clone PC of the day.

|

For 1993 https://www.dosdays.co.uk/topics/1993.php

Commodore UK had Commodore DT486dx-25 (with 4 MB RAM, 52 MB HDD, MS-DOS 5.0, Win3.1, mouse, 14" colour VGA monitor) for £760, which is about AU$1,520.

https://www.youtube.com/watch?v=I32EDXHEEA0

The last Commodore 486DX mini-tower with 486DX4 via Commodore Canada.

Australia's September 1993's 486SX25,

https://i.ibb.co/jV4T43L/1993-QLD-PC-price-list-example.png

486SX-33 (VESA) PC student package for AU$1545

4MB RAM, 1.44MB FDD, 130 MB HDD, 512K VGA card, SVGA monitor, 2 serial / 1 LPT, 1 game port, desktop case, 101 keyboard and mouse.

New Zealand's currency and economies of scale are weaker than Australia's.

In Australia, the Amiga wasn't price vs performance competitive in 1992 and 1993.

https://vintageapple.org/pcworld/pdf/PC_World_9306_June_1993.pdf

Gateway Party List, Page 72 of 314

4SX-33 with 486-SX 33Mhz, 4MB RAM, 170 MB HDD, Windows Video accelerator 1MB video DRAM, 14-inch monitor for US$1494,

4DX-33 with 486-DX 33Mhz, 8MB RAM, 212 MB HDD, Windows Video accelerator 1MB video DRAM, 14-inch monitor for US$1895,

Page 128 of 314

Polywell Poly 486-33V with 486SX-33, 4MB of RAM, SVGA 1MB VL-Bus, price: US$1250

https://vintageapple.org/pcworld/pdf/PC_World_9308_August_1993.pdf

Gateway Party List, Page 62 of 324

4SX-33 with 486-SX 33Mhz, 4MB RAM, 212MB HDD, Windows Video accelerator 1MB video DRAM, 14-inch monitor for US$1495,

4DX-33 with 486-DX 33Mhz, 8MB RAM, 212 MB HDD, Windows Video accelerator 1MB video DRAM, 14-inch monitor for US$1795,

Remember Gateway?

Page 292 of 324

From Comtrade

VESA Local Bus WinMax with 32-Bit VL-Bus Video Accelerator 1MB, 486DX2 66 Mhz, 210 MB HDD, 4MB RAM, Price: US$1795

https://vintageapple.org/pcworld/pdf/PC_World_9310_October_1993.pdf

October 1993, Page 13 of 354,

ALR Inc, Model 1 has a Pentium 60-based PC for US$2495.

https://archive.org/details/amiga-world-1993-10/page/n7/mode/2up

Amigaworld, October 1993, Page 66 of 104

Amiga 4000/040 @ 25Mhz for US$2299 (WTF? price close to Pentium PC clone)

Amiga 4000/030 @ 25Mhz for US$1599

Page 82 of 104

M1230X's 68030 @ 50 MHz is US$349

1942 Monitor is US$389

A1200 with 85MB HDD is US$624

A1200 with 130MB HDD is US$724

The Commodore Amiga solution is beaten by the Gateway solution.

For Q4 1993, Apple Mac LC 475 (with 68LC040-25) remains price vs performance competitive with 486SX-33 PC clones.

Don't put your tiny, weak New Zealand experience on the USA or Australia!

Quote:

If you wanted a 486 PC then either of these machines would do the job. An Amiga wouldn't, no matter what 68k CPU it had. It wouldn't run Microsoft Windows, or Word or Excel, or MYOB or QuickBooks, or even Wolfenstein 3D.

|

FakeMac can run the mentioned software on the Amiga. Without gaming priority, my desktop computer would be a Mac.

For example, MYOB. v5.0.1 runs on Macs 68K and PPC.

My EC040 mass production argument is for 3D games against game consoles with poor man's LC040-level CPUs, i.e. the MIPS R3051 CPU.

Before CD32's release, Wing Commander and Wolfenstein 3D were candidates for a license port. Wing Commander was selected instead of Wolfenstein 3D.

Quote:

It would be completely useless except for running Amiga software.

|

There are fake Mac solutions on the Amiga, but there's a price vs performance issue.

Stop defending Commodore management bullshit.

Last edited by Hammer on 23-Aug-2025 at 05:24 AM.

Last edited by Hammer on 23-Aug-2025 at 05:18 AM.

Last edited by Hammer on 23-Aug-2025 at 05:04 AM.

_________________

|

| | Status: Offline |

| |  Hammer Hammer

|  |

Re: CPU instruction pipelinining, clock speeds and the Megahertz Myth

Posted on 23-Aug-2025 4:33:00

| | [ #11 ] |

| |

|

Elite Member

|

Joined: 9-Mar-2003

Posts: 6704

From: Australia | | |

|

| @matthey

Quote:

The 68060 die shrinks were likely the minimum rework to move to a new process node. A hint is that there is no record of changes to the transistor count. Intel even performed one of these minimal rework die shrinks once with the P54CQS in 1995.

|

1995 released Pentium Overdrive (P24T's PODP5V63, PODP5V83) implementation had double L1 cache (16KB + 16KB) before Pentium MMX (P55C).

P24T (Pentium Overdrive 63 and 83 Mhz), P54CQS (Pentium 120 Mhz), P54CS (Pentium 133 Mhz), and P6 (Pentium Pro 150 to 200Mhz) SKUs were released in 1995.

Quote:

I'm already aware of GunnarVB's post in NXP's forum.

TomE: Nice idea, just 20 to 30 years too late.

GunnarVB's effort is effectively a 68K/Coldfire CPU-compatible cloner that competes directly against NXP's self-interest. This amounts to an AMD employee posting in Intel's forums.

AMD-Intel discussions on X86 architecture are on the X86 Ecosystem Advisory Group.

Besides AMD and Intel, the X86 Ecosystem Advisory Group also includes Dell, Broadcom, Google Cloud, HP, HPE, Lenovo, Meta, Microsoft, Oracle, and Red Hat. The luminaries include Linus Torvalds, the creator of Linux, and Tim Sweeney of Epic Games.

https://www.techpowerup.com/327755/what-the-intel-amd-x86-ecosystem-advisory-group-is-and-what-its-not

Quote:

ColdFire had already added back a large portion of 68k support and Gunnar suggested the cost was low to add back more.

He suggests that the max clock speed differences between ColdFire and 68k cores are not only minimal but that the 68060 6 bytes/cycle instruction superscalar execution limitation could easily be increased to 8 bytes/cycle significantly increasing the already very good 68060 multi-issue rate. This is based on timing in a FPGA which is more challenging than for an ASIC. Gunnar's data suggests that it was never worthwhile to drop 68k compatibility for ColdFire and the loss of compatibility and downgrade of features, performance and code density were major reason why Motorola lost the market to ARM with Thumb-2 which was upgrading features and retaining compatibility, until recently where now ARM removed their three 32-bit ISAs from their Cortex-A cores with a loss of compatibility and code density. ARM was improving their code density, adding enhancements and beefing up their performance while Motorola was castrating their beautiful baby before tossing it out with the bath water.

|

NXP (with STM partner) added 16-bit VLE with PowerPC e200 series.

https://www.nxp.com/docs/en/reference-manual/VLEPEM.pdf

With 16-bit VLE for PowerPC, NXP/STM is not returning to 68K.

Last edited by Hammer on 23-Aug-2025 at 04:50 AM.

Last edited by Hammer on 23-Aug-2025 at 04:39 AM.

_________________

|

| | Status: Offline |

| |  cdimauro cdimauro

|  |

Re: CPU instruction pipelinining, clock speeds and the Megahertz Myth

Posted on 23-Aug-2025 5:07:03

| | [ #12 ] |

| |

|

Elite Member

|

Joined: 29-Oct-2012

Posts: 4602

From: Germany | | |

|

| @Hammer

Quote:

You continue to report it, but there's not a single benchmark using this VLE for embedded (and solely there, it looks like).

That's despite my having already asked you several times.

Since there's nothing yet, I wonder who is using it. If anyone ever did it... |

| | Status: Offline |

| |  Hammer Hammer

|  |

Re: CPU instruction pipelinining, clock speeds and the Megahertz Myth

Posted on 23-Aug-2025 5:35:22

| | [ #13 ] |

| |

|

Elite Member

|

Joined: 9-Mar-2003

Posts: 6704

From: Australia | | |

|

| @cdimauro

Quote:

cdimauro wrote:

@Hammer

You continue to report it, but there's not a single benchmark using this VLE for embedded (and solely there, it looks like).

That's despite I've already asked you several times.

Since there's nothing yet, I wonder who is using it. If anyone ever did it...

|

1. I don't care about NXP/STM's PowerPC VLE vs 68K. PPC fanboys can cover their CPU horse.

2. The FACT remains that NXP/STM's pro-PowerPC VLE position shows they will not return to 68K. This is corporate policy. You have a problem with the corporate directive.

3. For VLE PPC, NXP/STM claims 30 percent code density improvement.

4. 68040's and 68060's designs are locked up in NXP's licensing policies; refer to Rochester Electronics's 68040 license from NXP.

As with CISC, 68K's fused data loads with ALU operation are simple enough to understand, and there are other methods for fusing multiple operations into one instruction.

.

Last edited by Hammer on 23-Aug-2025 at 05:38 AM.

_________________

|

| | Status: Offline |

| |  cdimauro cdimauro

|  |

Re: CPU instruction pipelinining, clock speeds and the Megahertz Myth

Posted on 23-Aug-2025 5:51:41

| | [ #14 ] |

| |

|

Elite Member

|

Joined: 29-Oct-2012

Posts: 4602

From: Germany | | |

|

| @Hammer

Quote:

Hammer wrote:

@cdimauro

Quote:

cdimauro wrote:

@Hammer

You continue to report it, but there's not a single benchmark using this VLE for embedded (and solely there, it looks like).

That's despite I've already asked you several times.

Since there's nothing yet, I wonder who is using it. If anyone ever did it...

|

1. I don't care about NXP/STM's PowerPC VLE vs 68K. PPC fanboys can cover their CPU horse. |

I haven't talked about PowerPC vs 68k: I've ONLY talked about VLE.

You reported it already several times, in the context of code density, yet there's not a single number backing the credibility of this PowerPC extension on this key metric (which is THE key metric when talking about embedded. In fact, it's an extension for embedded).

BTW, I've just started reading this manual, and I've immediately found something which made me laugh. This is another set of engineers who were living in a parallel world.

I leave it to you as an exercise to figure out what I was talking about. Hint: it's at the very beginning of the documentation.

Quote:

| 2. The FACT remains that NXP/STM's pro-PowerPC VLE position shows they will not return to 68K. This is corporate policy. You have a problem with the corporate directive. |

Irrelevant.

Quote:

| 3. For VLE PPC, NXP/STM claims 30 percent code density improvement. |

Source?

Quote:

| 4. 68040's and 68060's designs are locked up in NXP's licensing policies; refer to Rochester Electronics's 68040 license from NXP. |

Irrelevant.

Quote:

| As with CISC, 68K's fused data loads with ALU operation are simple enough to understand, and there are other methods for fusing multiple operations into one instruction |

Irrelevant. |

| | Status: Offline |

| |  Hammer Hammer

|  |

Re: CPU instruction pipelinining, clock speeds and the Megahertz Myth

Posted on 26-Aug-2025 5:33:18

| | [ #15 ] |

| |

|

Elite Member

|

Joined: 9-Mar-2003

Posts: 6704

From: Australia | | |

|

| @cdimauro

Quote:

Relevant.

Quote:

https://www.nxp.com/docs/en/supporting-information/VLEPIM.pdf

Page 25 of 56

Reduce overall code size by 30 percent over existing PowerPC text segments

Quote:

Relevant.

Quote:

Relevant.

Code density is relevant for the intended use case. The embedded MPU use case is irrelevant for the Amiga (this website) i.e. the Amiga is a desktop computer that targets desktop games.

Last edited by Hammer on 26-Aug-2025 at 05:42 AM.

Last edited by Hammer on 26-Aug-2025 at 05:38 AM.

_________________

|

| | Status: Offline |

| |  matthey matthey

|  |

Re: CPU instruction pipelinining, clock speeds and the Megahertz Myth

Posted on 26-Aug-2025 19:56:30

| | [ #16 ] |

| |

|

Elite Member

|

Joined: 14-Mar-2007

Posts: 2840

From: Kansas | | |

|

| cdimauro Quote:

Just a note on the Pentium-4. It was designed with a lot of pipeline stages (20) with some precise goals:

- pushing up the operating frequencies;

- focusing on multimedia / number crunching tasks (read: code which is LESS sensible to branch mispredictions);

- added HyperThreading to better address the cases where the pipeline is stalled, and the backend can be used for performing other tasks.

If you take a look at the benchmarks of the time, it performed not well on the regular code. But it shined on number crunching stuff, and much more when an application was able to use two hardware threads.

Unfortunately, the first goal crashed against the transistors/silicon limits, and Intel had to abandon this microarchitecture (the plan was to reach 10Ghz by 2010).

|

Pentium 4 designs increased from 20 stages to 31 stages to further increase the max clock speed. This is bad for general purpose code with branches but good for SIMD use with few branches. Such a design is sometimes called a throughput or multimedia processor. Designs with a similar philosophy follow.

2005 The superscalar in-order ARM Cortex-A8 used a 13-stage pipeline which does not sound so bad but it did not have hardware multi-threading for stalls. Performance was improved for SIMD/multimedia use but general purpose use suffered and the more practical in-order 8-stage Cortex-A7 replaced it.

2006 The superscalar in-order IBM PPC Cell@3.2GHz with a 23-stage pipeline has a similar philosophy except the general purpose PPC CPU core was stripped down more to a very simple in-order two issue design with two hardware threads while independent SIMD units were added. General purpose performance was worse than the Pentium 4 with some benchmarks showing performance to be equivalent to a PPC core operating at roughly half of the Cell clock speed.

2008 The superscalar in-order Intel Atom "Bonnell" had a 16-19 stage pipeline. It supports a relatively high clock speed and two hardware threads for modest general purpose performance with better SIMD/multimedia performance.

2010 The cancelled Intel Larrabee GPGPU project was to use many simple superscalar in-order x86 CPU cores like the original P5 Pentium but with powerful SIMD unit enhancements. The very wide 512b SIMD limited core clock speeds and CPU cores with SIMD units were larger than SIMD units by themselves which is roughly what unified shaders are (Cell SPEs were capable of GPU workloads but there were not enough cores and they lacked some GPU specialized support). From 24-48 cores were required for GPU rendering at the time and these in-order CPU cores could double as e-cores but OoO p-cores would still be desirable for desktop competitiveness. It is surprising that competitors did not try similar projects with slimmer cores as even the 68060 has ~20% smaller cores, probably more if adjusting for the deeper 68060 pipeline. Smaller cores leave more transistors available for more cores which improves scaling. Larrabee is somewhat different in that it did not target extreme clock speeds with a shallower pipeline and supported 4 hardware threads per core. They tried to increase parallelism without extreme clock speeds which is more like GPU unified shaders.

More instruction pipeline stages use more transistors, as between each stage there are latches/registers that hold data from the previous stage. While instruction level parallelism (ILP) and max clock speed increase with deeper pipelines, instruction latency increases a small amount with each stage, and the length of stalls like branch and load-to-use stalls usually increases in cycles, making programming more difficult. This is just educational information related to the thread and not directed at anyone in particular.
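The trade-off described above can be sketched with a very simple model: total logic delay is split across the stages, each stage adds a fixed latch overhead, and a mispredicted branch costs roughly a pipeline refill. Every number below (logic delay, latch overhead, branch fraction, mispredict rate) is an assumption I picked for illustration, and the model ignores load-to-use stalls, caches and superscalar issue.

```python
def pipeline_model(stages, logic_ns=10.0, latch_ns=0.1,
                   branch_fraction=0.2, mispredict_rate=0.08):
    """Return (cycle_ns, cpi, ns_per_instruction) for a scalar pipeline.

    All default parameters are illustrative assumptions, not measurements.
    """
    # Logic is divided evenly across stages; each stage pays latch overhead.
    cycle_ns = logic_ns / stages + latch_ns
    # Assume a mispredicted branch flushes and refills nearly the whole pipe.
    penalty_cycles = stages - 1
    cpi = 1.0 + branch_fraction * mispredict_rate * penalty_cycles
    return cycle_ns, cpi, cycle_ns * cpi

if __name__ == "__main__":
    for stages in (7, 20, 31):
        cycle_ns, cpi, ns_per_instr = pipeline_model(stages)
        print(stages, round(1000.0 / cycle_ns), "MHz",
              round(cpi, 3), "CPI", round(ns_per_instr, 3), "ns/instr")
```

In this model the deeper pipeline still finishes instructions faster, but the gain is smaller than the clock ratio suggests, because the latch overhead does not shrink and each mispredicted branch wastes more cycles.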

Hammer Quote:

I'm already aware of GunnarVB's post in NXP's forum.

TomE: Nice idea, just 20 to 30 years too late.

GunnarVB's effort is effectively a 68K/Coldfire CPU-compatible cloner that competes directly against NXP's self-interest. This amounts to an AMD employee posting in Intel's forums.

|

I wonder where the idea of a 68k/ColdFire compatible CPU came from and who pushed Gunnar to implement ColdFire instructions like MVS/MVZ before Gunnar removed them for his wonky ISA only to change his ISA and reenable them in AC68080 cores.

NXP has no "self-interest" in anything 68k or ColdFire related. Large businesses often pursue larger and higher margin markets while licensing to and ignoring smaller and lower margin markets that can provide opportunities for smaller businesses. Rochester Electronics is an example of a smaller business that provides a service for chip producers like Motorola/Freescale/NXP. They are more likely considered a business partner rather than a competitor and their license likely generates revenue for NXP. The same is true for Silvaco, who licenses and sublicenses ColdFire cores, likely generating revenue for NXP. It is true that the 68k and ColdFire are off NXP's radar right now. Speaking of AMD, the x86 was off Intel's radar as they developed Itanium until AMD showed there was a market for it. Bill Mensch did not find interest from larger chip producers in developing a CMOS 6502 until he developed the 65C02 himself which sold 25 million chips per year and resulted in Commodore suing him and using the design themselves.

WDC Carries 6502 Flag into New Arenas

https://www.cecs.uci.edu/~papers/mpr/MPR/ARTICLES/080903.pdf

Freescale/NXP is interested in professionally developed high code density ISAs, as they licensed cores using the BA2 ISA for some of their chips. BA2 likely has only 5%-15% better code density than their 68k and ColdFire ISAs, for which they already own cores, and may have no better code density than a 68k/ColdFire compatible ISA. Freescale/NXP does not seem to do ISA development anymore, usually choosing ARM à la carte and paying the ARM IP tax, which is easy, and they are satisfied with their margins partially based on existing sales channels and reputation. They basically just develop SoCs and MCUs from primarily ARM IP. After their organically developed 68k and 88k were tossed by upper management and many engineers left Motorola, they were never the same, especially in regard to ISA development.

Hammer Quote:

PPC is just as dead to NXP as the 68k and ColdFire. The problem with PPC VLE is how late it was, arriving in 2006, long after Thumb-2 was established. It was aimed more at lower end embedded cores where Thumb-2 did not have the performance, so mostly automotive. The other PPC embedded niche was telecommunication, which tended to use more powerful cores without VLE. It never had good developer support as it was not standard or compatible with the existing PPC ISA. The 68k and ColdFire have the advantage that they were much earlier with compressed ISAs, compression was standard, they were much more popular with large libraries of 68k and ColdFire code and there is a larger market for hardware replacements.

Not only was Motorola/Freescale/NXP too little too late for the compressed 16-bit and 32-bit RISC market with Thumb-2 and MicroMIPS among top competitors but they were too little too late for the 16-bit RISC market with their MCore ISA in 1997 to compete against SuperH, Thumb and MIPS16. This was despite the fact that they created ColdFire with 16-bit, 32-bit and 48-bit VLE able to encode 32-bit immediates/displacements in a single instruction instead of multiple dependent instructions with a loss of performance. They did not exhibit an understanding of their ISAs and their advantages and disadvantages, had too many ISAs instead of standardizing on and improving the best ones and in general showed a lack of leadership with their ISAs. MCore turned into so much of an embarrassment that Motorola/Freescale tried to erase its existence. Most ISA manuals all the way back to the 6800 and 68000 are on the NXP website. Where is the following documentation describing the MCore encodings on the NXP website?

MCore microRISC Engine Programmers Reference Manual Revision 1.0

https://web.archive.org/web/20160304090032/http://www.saladeteletipos.com/pub/SistemasEmbebidos2006/PlacaMotorola/mcore_rm_1.pdf

The 68k, ColdFire and PPC VLE are off the radar of NXP but at least they are not ashamed of them.
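To make the earlier point about 32-bit immediates concrete, the sketch below encodes "load a 32-bit constant into a register" two ways: as a single 6-byte 68k/ColdFire `move.l #imm,d0`, and as the usual two dependent 4-byte PowerPC instructions `lis r3` followed by `ori r3,r3`. The helper names and register choices are mine, picked only for the example.

```python
import struct

def m68k_move_l_imm_d0(value):
    """Encode `move.l #value,d0`: 16-bit opcode 0x203C plus a 32-bit immediate."""
    return struct.pack(">HI", 0x203C, value & 0xFFFFFFFF)

def ppc_load_imm_r3(value):
    """Encode `lis r3,hi16` then `ori r3,r3,lo16` (two dependent instructions)."""
    value &= 0xFFFFFFFF
    lis = 0x3C600000 | (value >> 16)      # addis r3,0,hi16
    ori = 0x60630000 | (value & 0xFFFF)   # ori r3,r3,lo16
    return struct.pack(">II", lis, ori)

if __name__ == "__main__":
    const = 0x12345678
    m68k = m68k_move_l_imm_d0(const)
    ppc = ppc_load_imm_r3(const)
    print("68k/ColdFire:", m68k.hex(), len(m68k), "bytes, 1 instruction")
    print("PowerPC:     ", ppc.hex(), len(ppc), "bytes, 2 instructions")
```

The variable length encoding is both smaller here (6 bytes against 8) and avoids the dependency between the two halves of the load, since the second PowerPC instruction has to wait for the result of the first.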

Last edited by matthey on 26-Aug-2025 at 09:33 PM.

Last edited by matthey on 26-Aug-2025 at 08:10 PM.

Last edited by matthey on 26-Aug-2025 at 08:04 PM.

|

| | Status: Offline |

| |  MEGA_RJ_MICAL MEGA_RJ_MICAL

|  |

Re: CPU instruction pipelinining, clock speeds and the Megahertz Myth

Posted on 28-Aug-2025 4:04:44

| | [ #17 ] |

| |

|

Super Member

|

Joined: 13-Dec-2019

Posts: 1409

From: AMIGAWORLD.NET WAS ORIGINALLY FOUNDED BY DAVID DOYLE | | |

|

| Quote:

matthey wrote:

cdimauro Quote:

Just a note on the Pentium-4. It was designed with a lot of pipeline stages (20) with some precise goals:

- pushing up the operating frequencies;

- focusing on multimedia / number crunching tasks (read: code which is LESS sensible to branch mispredictions);

- added HyperThreading to better address the cases where the pipeline is stalled, and the backend can be used for performing other tasks.

If you take a look at the benchmarks of the time, it performed not well on the regular code. But it shined on number crunching stuff, and much more when an application was able to use two hardware threads.

Unfortunately, the first goal crashed against the transistors/silicon limits, and Intel had to abandon this microarchitecture (the plan was to reach 10Ghz by 2010).

|

Pentium 4 designs increased from 20-stage to 31-stage to further increase the max clock speed. Bad for general purpose code with branches but good for SIMD use with few branches. This is sometimes called a throughput or multimedia processor. Similar in philosophy designs follow.

2005 The superscalar in-order ARM Cortex-A8 used a 13-stage pipeline which does not sound so bad but it did not have hardware multi-threading for stalls. Performance was improved for SIMD/multimedia use but general purpose use suffered and the more practical in-order 8-stage Cortex-A7 replaced it.

2006 The superscalar in-order IBM PPC Cell@3.2GHz with a 23-stage pipeline has a similar philosophy except the general purpose PPC CPU core was stripped down more to a very simple in-order two issue design with two hardware threads while independent SIMD units were added. General purpose performance was worse than the Pentium 4 with some benchmarks showing performance to be equivalent to a PPC core operating at roughly half of the Cell clock speed.

2008 The superscalar in-order Intel Atom "Bonnell" had a 16-19 stage pipeline. It supports a relatively high clock speed and two hardware threads for modest general purpose performance with better SIMD/multimedia performance.

2010 The cancelled Intel Larrabee GPGPU project was to use many simple superscalar in-order x86 CPU cores like the original P5 Pentium but with powerful SIMD unit enhancements. The very wide 512b SIMD limited core clock speeds and CPU cores with SIMD units were larger than SIMD units by themselves which is roughly what unified shaders are (Cell SPEs were capable of GPU workloads but there were not enough cores and they lacked some GPU specialized support). From 24-48 cores were required for GPU rendering at the time and these in-order CPU cores could double as e-cores but OoO p-cores would still be desirable for desktop competitiveness. It is surprising that competitors did not try similar projects with slimmer cores as even the 68060 has ~20% smaller cores, probably more if adjusting for the deeper 68060 pipeline. Smaller cores leave more transistors available for more cores which improves scaling. Larrabee is somewhat different in that it did not target extreme clock speeds with a shallower pipeline and supported 4 hardware threads per core. They tried to increase parallelism without extreme clock speeds which is more like GPU unified shaders.

More instruction pipeline stages use more transistors as between each stage there are latches/registers that hold data from the previous stage. While instruction level parallelism (ILP) and max clock speed increase with deeper pipelines, instruction latency increases a small amount with each stage and the length of stalls like branch and load-to-use stalls usually increase in cycles making programming more difficult. This is just educational information related to the thread and not directed at anyone in particular.
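As a rough illustration of the trade-off described above, here is a minimal Python sketch. It assumes a fixed amount of logic delay split evenly across the stages plus a fixed latch/register overhead per stage. The function names and the 10 ns / 0.1 ns figures are hypothetical and chosen only to show the shape of the curve, not measured from any real CPU.

```python
# Toy model: total logic delay is split evenly across pipeline stages,
# and every stage adds a fixed latch/register overhead.
# All numbers are hypothetical and for illustration only.

def cycle_time_ns(stages, logic_delay_ns=10.0, latch_overhead_ns=0.1):
    """Clock period of one stage: its share of the logic plus latch overhead."""
    return logic_delay_ns / stages + latch_overhead_ns

def max_clock_mhz(stages, **kw):
    """Maximum clock frequency implied by the stage cycle time."""
    return 1000.0 / cycle_time_ns(stages, **kw)

def instruction_latency_ns(stages, **kw):
    """Time for one instruction to pass through every stage."""
    return stages * cycle_time_ns(stages, **kw)

for stages in (4, 7, 13, 20, 31):
    print(f"{stages:2d} stages: {max_clock_mhz(stages):7.1f} MHz, "
          f"latency {instruction_latency_ns(stages):5.2f} ns")
```

In this model the max clock rises with every added stage while the latency of a single instruction creeps up by one latch overhead per stage, which matches the "small amount with each stage" described above. Stall lengths in cycles are not modelled here.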

Hammer Quote:

I'm already aware of GunnarVB's post in NXP's forum.

TomE: Nice idea, just 20 to 30 years too late.

GunnarVB's effort is effectively a 68K/Coldfire CPU-compatible cloner that competes directly against NXP's self-interest. This amounts to an AMD employee posting in Intel's forums.

|

I wonder where the idea of a 68k/ColdFire compatible CPU came from and who pushed Gunnar to implement ColdFire instructions like MVS/MVZ before Gunnar removed them for his wonky ISA only to change his ISA and reenable them in AC68080 cores.

NXP has no "self-interest" in anything 68k or ColdFire related. Large businesses often pursue larger and higher margin markets while licensing to and ignoring smaller and lower margin markets that can provide opportunities for smaller businesses. Rochester Electronics is an example of a smaller business that provides a service for chip producers like Motorola/Freescale/NXP. They are more likely considered a business partner rather than a competitor and their license likely generates revenue for NXP. The same is true for Silvaco, which licenses and sublicenses ColdFire cores, likely generating revenue for NXP. It is true that the 68k and ColdFire are off NXP's radar right now. Speaking of AMD, the x86 was off Intel's radar as they developed Itanium until AMD showed there was a market for it. Bill Mensch did not find interest from larger chip producers in developing a CMOS 6502 until he developed the 65C02 himself which sold 25 million chips per year and resulted in Commodore suing him and using the design themselves.

WDC Carries 6502 Flag into New Arenas

https://www.cecs.uci.edu/~papers/mpr/MPR/ARTICLES/080903.pdf

Freescale/NXP is interested in professionally developed high code density ISAs as they licensed cores using the BA2 ISA for some of their chips. BA2 likely has only 5%-15% better code density than their 68k and ColdFire ISAs, where they already own cores, and may have no better code density than a 68k/ColdFire compatible ISA. Freescale/NXP does not seem to do ISA development anymore, usually choosing ARM à la carte and paying the ARM IP tax, which is easy, and they are satisfied with their margins, partially based on existing sales channels and reputation. They basically just develop SoCs and MCUs from primarily ARM IP. After their organically developed 68k and 88k were tossed by upper management and many engineers left Motorola, they were never the same, especially in regards to ISA development.

Hammer Quote:

PPC is just as dead to NXP as the 68k and ColdFire. The problem with PPC VLE is how late it was, arriving in 2006 long after Thumb-2 was established. It was aimed more at lower end embedded cores where Thumb-2 did not have the performance, which meant automotive. The other PPC embedded niche was telecommunication, which tended to use more powerful cores without VLE. VLE never had good developer support as it was not standard or compatible with the existing PPC ISA. The 68k and ColdFire have the advantage that they were much earlier with compressed ISAs, compression was standard, they were much more popular with large libraries of 68k and ColdFire code and there is a larger market for hardware replacements.

Not only was Motorola/Freescale/NXP too little too late for the compressed 16-bit and 32-bit RISC market with Thumb-2 and MicroMIPS among top competitors but they were too little too late for the 16-bit RISC market with their MCore ISA in 1997 to compete against SuperH, Thumb and MIPS16. This was despite the fact that they created ColdFire with 16-bit, 32-bit and 48-bit VLE able to encode 32-bit immediates/displacements in a single instruction instead of multiple dependent instructions with a loss of performance. They did not exhibit an understanding of their ISAs and their advantages and disadvantages, had too many ISAs instead of standardizing on and improving the best ones and in general showed a lack of leadership with their ISAs. MCore turned into so much of an embarrassment that Motorola/Freescale tried to erase its existence. Most ISA manuals all the way back to the 6800 and 68000 are on the NXP website. Where is the following documentation describing the MCore encodings on the NXP website?

MCore microRISC Engine Programmers Reference Manual Revision 1.0

https://web.archive.org/web/20160304090032/http://www.saladeteletipos.com/pub/SistemasEmbebidos2006/PlacaMotorola/mcore_rm_1.pdf

The 68k, ColdFire and PPC VLE are off the radar of NXP but at least they are not ashamed of them.

|

said the retail pusher of agricultural tires

_________________

I HAVE ABS OF STEEL

--

CAN YOU SEE ME? CAN YOU HEAR ME? OK FOR WORK |

| | Status: Offline |

| |  Hammer Hammer

|  |

Re: CPU instruction pipelinining, clock speeds and the Megahertz Myth

Posted on 28-Aug-2025 5:22:45

| | [ #18 ] |

| |

|

Elite Member

|

Joined: 9-Mar-2003

Posts: 6704

From: Australia | | |

|

| @matthey

Quote:

Pentium 4 designs increased from 20-stage to 31-stage to further increase the max clock speed. Bad for general purpose code with branches but good for SIMD use with few branches. This is sometimes called a throughput or multimedia processor. Similar in philosophy designs follow.

|

https://www.agner.org/optimize/microarchitecture.pdf

The average misprediction penalty was measured to approximately 18 clock cycles for Zen 1-3. The misprediction penalty varies from 15 - 18 in Zen 4 and 15 - 25 in Zen 5.

Misprediction penalty reveals pipeline length.

AM4 socket's Zen 1 to 3 pipeline depth is about 15 to 18. The current PlayStation 5 and Xbox Series S/X game consoles feature Zen 2-based architecture.

AM5 socket's Zen 4 to 5 pipeline depth is about 15 to 25. Zen 4 has a higher clock speed and improved branch prediction hardware when compared to Zen 3.

https://overclock3d.net/news/cpu_mainboard/amd-7-ghz-zen-6-clock-speed-target-confirmed-leaker-claims/

AMD's Zen 6 reportedly aims for 7 GHz.

A long pipeline length is reasonable when the branch prediction hardware's capability scales with it.
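To put rough numbers on that scaling argument, here is a small Python sketch. The penalty values of 18 and 25 cycles come from the Agner Fog figures quoted above. The branch fraction, the misprediction rate and the function names are assumptions for illustration only.

```python
# Back-of-envelope cost of branch mispredictions.
# Penalties (18 and 25 cycles) are from the quoted Agner Fog figures;
# branch_fraction and mispredict_rate are assumed values.

def stall_cycles_per_instruction(penalty_cycles, branch_fraction, mispredict_rate):
    """Average cycles lost per instruction to mispredicted branches."""
    return penalty_cycles * branch_fraction * mispredict_rate

def max_mispredict_rate(penalty_cycles, branch_fraction, stall_budget):
    """Misprediction rate that keeps the loss within a given stall budget."""
    return stall_budget / (penalty_cycles * branch_fraction)

branch_fraction = 0.2   # assumption: 1 in 5 instructions is a branch
baseline = stall_cycles_per_instruction(18, branch_fraction, 0.05)

for penalty in (18, 25):
    rate = max_mispredict_rate(penalty, branch_fraction, baseline)
    print(f"penalty {penalty} cycles: misprediction rate must stay at or below {rate:.3f}")
```

Under these assumptions, a longer penalty needs a proportionally lower misprediction rate just to lose the same number of cycles per instruction, which is the sense in which the predictor has to scale with pipeline length.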

Quote:

NXP has no "self-interest" in anything 68k or ColdFire related

|

NXP has self-interest, e.g. NXP hasn't open-sourced the old 68040 and 68060 designs.

NXP has licensed its 68040 design to Rochester Electronics. A license with conditions enables NXP to have a measure of control over its 68040 IP.

Quote:

It is true that the 68k and ColdFire are off NXP's radar right now. Speaking of AMD, the x86 was off Intel's radar as they developed Itanium until AMD showed there was a market for it.

|

Nope. Project Yamhill (EM64T) shows Intel's "only the paranoid survive" mindset. The Yamhill project served as a crucial "fallback" if Intel's primary 64-bit initiative, the Itanium processor, proved unsuccessful.

Quote:

Bill Mensch did not find interest from larger chip producers in developing a CMOS 6502 until he developed the 65C02 himself which sold 25 million chips per year and resulted in Commodore suing him and using the design themselves.

|

Commodore designed its own CMOS 6502 variants, the 55C02 and 4502.

From Commodore - The Final Years book

Commodore had purchased the rights from the Western Design Center (WDC) to produce Bill Mensch's 65C02 for half price and had plans to use the chips in the LCD computer. However, there were restrictions. "We had the Western Design Center's database for the 65SC02, but it had with it stipulations that it could not be sold as a stand-alone part," says Gardei. If CSG wanted to sell the CMOS chips to Atari or other customers, it would have to design its own.

Gardei also wanted the ability to customize the chips. "Western Design Center did not have what we wanted," he says. "We wanted flexibility and the capability of doing anything we wanted with our own core."

Commodore's Large Scale Integration department, headed by Bob Olah, began preliminary investigations in July 1985 into a CMOS 6502 chip which Lenthe called the 55C02.

Western Design Center's 65SC02 has license restrictions that are incompatible with CSG's management direction.

Continuing Commodore - The Final Years book

Lenthe gave no schedule for the project, due to the recent cancellation of the LCD computer, and by December 1985 Commodore scrapped the project.

It seemed like the project was dead, until a new recruit decided to take it on himself. Bill Gardei had worked for the LSI group since the beginning of the year. "I was interviewed and hired by Bob Olah in February of 1985," he says.

On his own, he decided to resurrect the 5502 project, this time using 2 micron CMOS. He dubbed the new chip the 4502. "All new CMOS custom chips were given the series number of 4000 and up, so 4502 was the obvious choice," he says. "The 4502 core development started in late 1985. The original designers were myself and Charles Hauck."

(skip)

Gardei was not content to just copy the 6502 and make a CMOS version. Rather, he had upgraded it. "The 4502 was an improved 6502 core, which had some or all of the GTE extensions, and some extensions that Hedley Davis came up with," says Dave Haynie.

The GTE extensions consisted of additional opcodes. "The extensions were new opcodes that the original 6502 and 6510 did not have," says Gardei. "Adding instructions to a CPU makes it more flexible and more powerful."

By early August 1987, the design was complete, and Gardei handed it off to other engineers within Commodore's LSI group. For the next several months, they would create a chip layout and finally manufacture samples of the chip.

(skip)

Marketing renamed the 4502 the 65CE02, and it would soon find its way into some Amiga 2000 boards.

CSG 45xx was used in various products.

Wiki's https://en.wikipedia.org/wiki/CSG_65CE02 is not accurate.